Use these pages to discover Linux-specific workflows, troubleshooting steps, and utilities tailored for your environments.


Linux

- 1: Administration

- 1.1: Linux Boot/Shutdown Quick-Analyzer

- 1.2: How Antivirus Software Can Prevent Linux Boot: Troubleshooting Guide

- 1.3: Understanding IO Delays in Linux - Performance Testing with io-delayer

- 1.4: Streamlining Linux Diagnostics with SOSParser

- 1.5: Linux Benchmarking Made Easy with Tux Toaster

- 2: Kernel

1 - Administration

Find automation helpers and reference material that streamline Linux administration tasks.

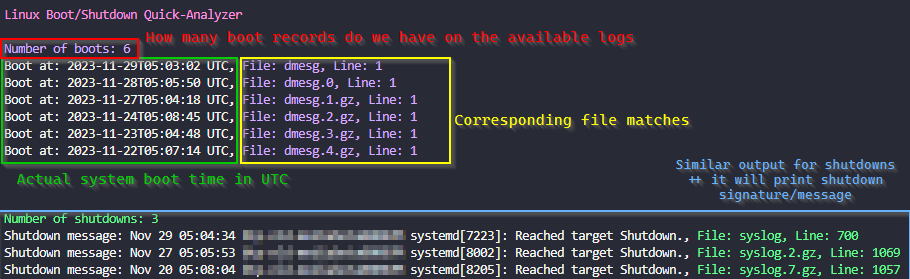

1.1 - Linux Boot/Shutdown Quick-Analyzer

What is Linux Boot/Shutdown Quick-Analyzer and why do you need it?

The Linux Boot/Shutdown Quick-Analyzer is a Python script that analyzes Linux boot and shutdown logs.

It makes it easy to find boot and shutdown events in system logs, providing information such as the number of boot and shutdown events, along with the timestamp, filename, and line number for each event.
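To make the idea concrete, here is a minimal sketch of this kind of scan. It is not the script's actual implementation: the two regular expressions and the `find_events` helper are assumptions chosen for illustration, and real logs may use different marker strings.

```python
import re
from typing import Iterable, List, NamedTuple

# Hypothetical markers; the real script may look for different strings.
BOOT_PATTERN = re.compile(r"Linux version \d")
SHUTDOWN_PATTERN = re.compile(
    r"systemd-shutdown|Reached target.*(Shutdown|Power-Off|Reboot)",
    re.IGNORECASE,
)


class Event(NamedTuple):
    kind: str         # "boot" or "shutdown"
    filename: str     # log file the line came from
    line_number: int  # 1-based line number inside that file
    text: str         # the matching log line


def find_events(filename: str, lines: Iterable[str]) -> List[Event]:
    """Return boot and shutdown events found in the given log lines."""
    events = []
    for number, line in enumerate(lines, start=1):
        if BOOT_PATTERN.search(line):
            events.append(Event("boot", filename, number, line.rstrip()))
        elif SHUTDOWN_PATTERN.search(line):
            events.append(Event("shutdown", filename, number, line.rstrip()))
    return events


# Made-up sample lines, used only to exercise the function.
sample = [
    "Jan 10 08:00:01 host kernel: Linux version 5.14.0 (gcc) #1 SMP",
    "Jan 10 08:00:05 host systemd[1]: Started Some Service.",
    "Jan 10 18:30:00 host systemd[1]: Reached target Shutdown.",
]
for event in find_events("messages", sample):
    print(f"{event.kind:8} {event.filename}:{event.line_number}  {event.text}")
```

Keeping the filename and line number with each match is what lets you jump straight to the surrounding context in the original log.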

How to run it

You need to have Python 3 installed on your machine.

To use the script, navigate to the directory containing your logs (usually /var/log) and run the following command:

# Make sure you are working inside your system logs dir

cd /var/log

# Execute the script

curl https://raw.githubusercontent.com/samatild/linuxrebootcheck/main/linuxrebootcheck.py | python3

Special arguments for special Serial Console logs!

What about those logs that are not in the usual format?

To analyze a Serial Console log file, download the script and pass the --serial-console-log argument followed by the filename:

curl -O https://raw.githubusercontent.com/samatild/linuxrebootcheck/main/linuxrebootcheck.py

python3 linuxrebootcheck.py --serial-console-log <your_log_file>

Conclusion

Overall, the Linux Boot/Shutdown Quick-Analyzer is a useful tool for analyzing Linux boot and shutdown logs. It provides a quick and easy way to find boot and shutdown events in system logs, making it easier to troubleshoot issues and identify potential problems. If you’re looking for a simple and efficient way to analyze your system logs, give it a try!

Source: github.com/samatild/linuxrebootcheck

1.2 - How Antivirus Software Can Prevent Linux Boot: Troubleshooting Guide

Understanding Antivirus Boot Interference

Antivirus software, while crucial for system security, can sometimes interfere with the Linux boot process. This occurs when security modules become overly aggressive during system initialization, potentially causing boot failures, readonly filesystem mounts, or service startup issues.

Common Symptoms

- System fails to boot completely

- Filesystem mounts as readonly (ro) instead of read-write (rw)

- Critical services fail to start

- Boot hangs at specific points

- SELinux/AppArmor policy violations during boot

Filesystem Readonly Issues

One of the most common problems occurs when antivirus software causes the root filesystem to mount readonly. This prevents the system from writing critical boot files and can halt the initialization process.

Root Cause Analysis

Antivirus software often implements filesystem integrity checking or real-time scanning that can interfere with:

- Journal replay during filesystem mounting

- Metadata updates during boot

- Temporary file creation in /tmp, /var, /run

Example Scenarios

Scenario 1: Journal Corruption Detection

[ 12.345678] EXT4-fs (sda1): INFO: recovery required on readonly filesystem

[ 12.345678] EXT4-fs (sda1): write access unavailable, cannot proceed

[ 12.345678] EXT4-fs (sda1): recovery failed, mounting readonly

Scenario 2: Real-time Scanner Blocking Writes

[ 15.678901] systemd[1]: Failed to start Local File Systems.

[ 15.678901] systemd[1]: Dependency failed for Remote File Systems.

[ 15.678901] mount[1234]: mount: / cannot be mounted read-write
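When you have many such lines to review, a small filter helps. The sketch below parses kernel-style log lines like the ones in the two scenarios and flags those that mention read-only behavior. The hint strings and the `parse_kernel_line` helper are assumptions for illustration, not an exhaustive list of kernel messages.

```python
import re
from typing import NamedTuple, Optional

# Matches lines such as "[ 12.345678] EXT4-fs (sda1): ..."
KERNEL_LINE = re.compile(r"^\[\s*(?P<ts>\d+\.\d+)\]\s+(?P<msg>.*)$")

# Illustrative substrings that suggest a read-only mount problem.
READONLY_HINTS = (
    "readonly",
    "read-only",
    "cannot be mounted read-write",
    "write access unavailable",
)


class KernelMessage(NamedTuple):
    seconds: float          # seconds since boot, from the bracketed prefix
    message: str            # text after the timestamp
    readonly_related: bool  # True if any hint substring is present


def parse_kernel_line(line: str) -> Optional[KernelMessage]:
    """Parse one timestamped log line; return None if it has no timestamp."""
    match = KERNEL_LINE.match(line.strip())
    if match is None:
        return None
    message = match.group("msg")
    lowered = message.lower()
    flagged = any(hint in lowered for hint in READONLY_HINTS)
    return KernelMessage(float(match.group("ts")), message, flagged)


for raw in (
    "[ 12.345678] EXT4-fs (sda1): recovery failed, mounting readonly",
    "[ 15.678901] systemd[1]: Failed to start Local File Systems.",
    "[ 15.678901] mount[1234]: mount: / cannot be mounted read-write",
):
    parsed = parse_kernel_line(raw)
    print(parsed.readonly_related, parsed.message)
```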

Recovery Steps

- Boot into recovery mode or single-user mode:

# At GRUB menu, press 'e' to edit

# Add 'single' or 'recovery' to kernel parameters

linux /boot/vmlinuz-... ro single

- Check filesystem integrity:

# Run filesystem check

fsck -f /dev/sda1

# If issues persist, check dmesg for antivirus-related messages

dmesg | grep -i "antivirus\|security\|scanner"

- Temporarily disable antivirus during boot:

# For systemd-based systems, mask the service temporarily

systemctl mask antivirus-service-name

systemctl reboot

Linux Security Modules (LSM) Conflicts

Linux Security Modules (LSM) provide the framework for security subsystems like SELinux, AppArmor, and various antivirus solutions. When multiple LSMs are active or improperly configured, they can conflict during boot.

LSM Architecture Overview

LSM hooks into the kernel at critical points:

- Process creation and execution

- File access operations

- Network operations

- Memory management

Common LSM Boot Conflicts

SELinux + Antivirus LSM:

- Both may attempt to enforce policies on the same resources

- Race conditions during policy loading

- Conflicting access decisions

AppArmor Profile Loading:

[FAILED] Failed to load AppArmor profiles

[FAILED] apparmor.service: Main process exited, code=exited, status=1/FAILURE

Troubleshooting LSM Issues

- Check LSM status:

# View active LSMs

cat /sys/kernel/security/lsm

# Check SELinux status

sestatus

# Check AppArmor status

apparmor_status

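- Boot with the security module in permissive mode or disabled:

# SELinux: permissive mode (policy is loaded, denials are logged but not enforced)

linux /boot/vmlinuz-... enforcing=0

# SELinux: disabled entirely

linux /boot/vmlinuz-... selinux=0

# AppArmor: disabled

linux /boot/vmlinuz-... apparmor=0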

- Review security logs:

# Check audit logs for LSM denials

ausearch -m avc -ts boot

# View journal for security module errors

journalctl -b | grep -i "security\|lsm\|selinux\|apparmor"
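The content of /sys/kernel/security/lsm is a single comma-separated list, so it is easy to inspect from a script. The following sketch parses such a string and picks out the mandatory access control modules. The `MAJOR_LSMS` grouping and the example string are assumptions for illustration; the list on your system will differ.

```python
from typing import List

# Illustrative grouping of the mandatory access control LSMs.
MAJOR_LSMS = {"selinux", "apparmor", "smack", "tomoyo"}


def parse_lsm_list(raw: str) -> List[str]:
    """Split the comma-separated LSM list into individual module names."""
    return [name.strip() for name in raw.split(",") if name.strip()]


def major_lsms(raw: str) -> List[str]:
    """Return only the access control modules from the active LSM list."""
    return [name for name in parse_lsm_list(raw) if name in MAJOR_LSMS]


# Example value only; read the real one from /sys/kernel/security/lsm.
example = "lockdown,capability,yama,apparmor\n"
print(parse_lsm_list(example))
print(major_lsms(example))
```

If `major_lsms` returns more than one entry, that is a hint to look closer at how the modules are stacked before blaming a third-party agent.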

CrowdStrike Falcon Sensor Boot Issues

CrowdStrike Falcon Sensor is a common enterprise antivirus solution that can cause boot problems when misconfigured. The sensor requires proper licensing and network connectivity to function correctly.

The Critical Error

When CrowdStrike Falcon Sensor fails during boot, you may see:

[FAILED] Failed to start CrowdStrike Falcon Sensor.

This failure can cascade into other issues:

- System may continue booting but without security protection

- Network services may fail if the sensor blocks them

- Filesystem operations may be restricted

Root Causes

- Missing or invalid license

- Network connectivity issues during sensor initialization

- Conflicting security policies

- Outdated sensor version

- Improper installation or configuration

Immediate Fix: Masking the Service

When the CrowdStrike service fails and blocks system access, you can temporarily mask it to allow the system to boot:

# Check the exact service name

systemctl list-units --all | grep -i crowdstrike

# Mask the service to prevent automatic startup

sudo systemctl mask falcon-sensor

# Reboot the system

sudo systemctl reboot

Permanent Solutions

- Verify licensing:

# Check CrowdStrike status

/opt/CrowdStrike/falconctl -g --cid

# If CID is missing, contact your administrator

- Update sensor:

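# Update CrowdStrike sensor

# Sensor updates are normally delivered through your package manager or a Falcon sensor update policy.

# Confirm that your falconctl version supports the option below before relying on it.

/opt/CrowdStrike/falconctl -s --update

# Or reinstall if update fails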

- Network configuration:

# Ensure DNS resolution works

nslookup falcon.crowdstrike.com

# Check proxy settings if applicable

env | grep -i proxy

- Configuration validation:

# Check sensor configuration

/opt/CrowdStrike/falconctl -g --tags

/opt/CrowdStrike/falconctl -g --version

Prevention Best Practices

- Test updates in staging environments

- Maintain current licensing

- Monitor sensor health regularly

- Have rollback procedures documented

General Troubleshooting Framework

Boot Analysis Steps

- Collect boot logs:

# View current boot logs

journalctl -b

# Save logs for analysis

journalctl -b > boot_logs.txt

- Identify the failing component:

# Check failed services

systemctl --failed

# Review systemd boot timeline

systemd-analyze blame

- Isolate antivirus components:

# List security-related services

systemctl list-units --type=service | grep -E "(security|antivirus|falcon|clamav)"

# Temporarily disable for testing

sudo systemctl stop antivirus-service

sudo systemctl disable antivirus-service
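To narrow a long list of failed units down to security tooling, you can post-process the output of `systemctl --failed --plain --no-legend`. This is a rough sketch: the keyword tuple and the `security_related_failures` helper are assumptions, and the sample output is invented for the example.

```python
from typing import List, Tuple

# Illustrative keywords; extend with the product names used in your environment.
SECURITY_KEYWORDS = ("falcon", "clamav", "antivirus", "security")


def security_related_failures(systemctl_output: str) -> List[Tuple[str, str]]:
    """Return (unit, description) pairs whose text matches a security keyword.

    Expects the column layout UNIT LOAD ACTIVE SUB DESCRIPTION.
    """
    hits = []
    for line in systemctl_output.splitlines():
        fields = line.split(None, 4)
        if len(fields) < 4:
            continue  # blank or malformed line
        unit = fields[0]
        description = fields[4] if len(fields) == 5 else ""
        haystack = f"{unit} {description}".lower()
        if any(keyword in haystack for keyword in SECURITY_KEYWORDS):
            hits.append((unit, description))
    return hits


# Invented sample output for demonstration.
sample_output = """\
falcon-sensor.service loaded failed failed CrowdStrike Falcon Sensor
nfs-server.service    loaded failed failed NFS server and services
"""
print(security_related_failures(sample_output))
```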

Recovery Options

Option 1: Clean Boot

- Disable all non-essential services

- Boot with minimal security modules

- Gradually re-enable components

Option 2: Recovery Environment

- Use live USB/CD for filesystem repair

- Access encrypted volumes if necessary

- Reinstall antivirus software if corrupted

Option 3: Kernel Parameters

# Boot parameters for troubleshooting

linux /boot/vmlinuz-... ro quiet splash security= selinux=0 apparmor=0

Prevention and Best Practices

System Configuration

- Proper service ordering:

# Ensure antivirus starts after critical filesystems

# Edit service files to add proper dependencies

systemctl edit antivirus-service

- Exclude system paths:

# Configure antivirus to exclude boot-critical paths

# Examples: /boot, /sys, /proc, /dev

- Regular maintenance:

# Update antivirus definitions

antivirus-update-command

# Monitor system logs for early warnings

logwatch --service antivirus

Monitoring and Alerting

- Set up log monitoring for antivirus-related errors

- Configure alerts for service failures

- Regular health checks of security components

- Documentation of emergency procedures

Conclusion

Antivirus software is essential for Linux security but requires careful configuration to avoid boot interference. Understanding LSM interactions, filesystem behavior, and specific tool requirements (like CrowdStrike Falcon Sensor) is crucial for maintaining system stability.

When issues occur, systematic troubleshooting—starting with log analysis and service isolation—usually reveals the root cause. Temporary fixes like service masking provide immediate relief while permanent solutions address underlying configuration problems.

Remember: security and stability aren’t mutually exclusive with proper planning and monitoring.

Related Resources

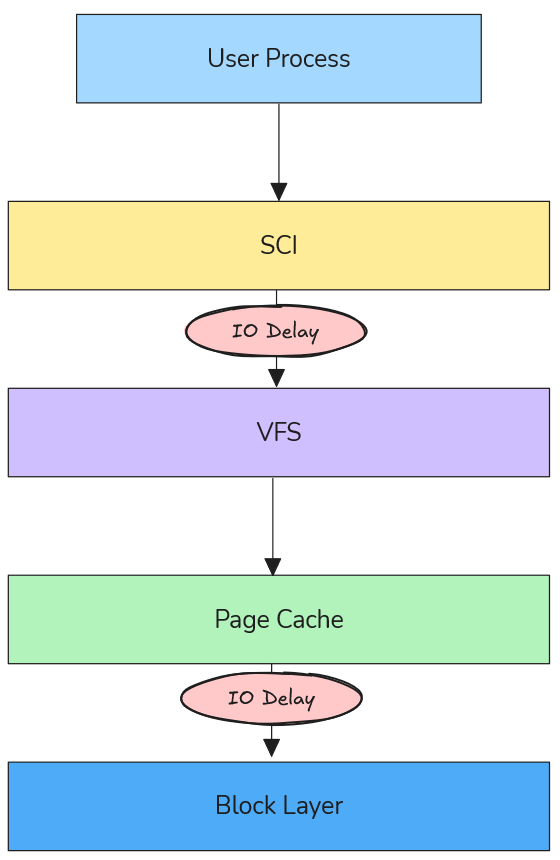

1.3 - Understanding IO Delays in Linux - Performance Testing with io-delayer

Simulate and analyze IO performance degradation at multiple kernel layers to understand system bottlenecks.

Why IO Delays Matter in System Performance

Input/Output operations form the backbone of system performance, yet they represent one of the most complex and often misunderstood aspects of Linux performance engineering. When applications experience slowdowns, the root cause frequently traces back to IO delays introduced at various kernel layers.

The io-delayer kernel module provides a controlled environment to study these delays, helping engineers understand how different types of IO interception impact overall system performance.

The io-delayer Tool

io-delayer is a Linux kernel module that demonstrates IO performance degradation by intercepting operations at two critical kernel layers:

- VFS (Virtual File System) Layer: Where path resolution and high-level file operations occur

- Block Device Layer: Where actual storage device interactions happen

Using kprobes, the module inserts configurable microsecond delays into the IO path, allowing precise measurement of performance impact across different scenarios.

VFS Layer Delays: The Path Resolution Bottleneck

Understanding VFS in the IO Stack

The Virtual File System layer sits between system calls and actual filesystem implementations. When an application calls open(), read(), or write(), these requests pass through VFS before reaching storage devices.

According to the Linux kernel VFS documentation, VFS provides a unified interface that abstracts different filesystem types while handling core operations like:

- Path name resolution (translating /home/user/file.txt to inode references)

- Permission checking

- File descriptor management

- Caching coordination

How VFS Delays Degrade Performance

VFS delays primarily impact path resolution times, which become critical in scenarios involving:

1. Directory Traversal Operations

// Every path component requires VFS resolution

int fd = open("/home/user/documents/large-dataset/file-001.dat", O_RDONLY);

Each directory in the path (home, user, documents, large-dataset) triggers VFS operations. A 100μs delay per VFS call on a deep path like /var/lib/docker/containers/... can accumulate to milliseconds.

2. Metadata-Heavy Workloads

Applications that perform many stat() calls or directory listings suffer disproportionately:

- Package managers during dependency resolution

- File browsers indexing directories

- Backup tools scanning filesystem trees

3. Process Startup Overhead

Binary execution involves resolving library paths through VFS:

# Dynamic linker resolves ~50-100 library paths per process start

execve("/usr/bin/python3", ...)
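The accumulation described under directory traversal can be estimated with simple arithmetic. The sketch below assumes a simplified model in which every path component costs one delayed VFS operation; the real kernel path walk (with its dentry cache) and the module's actual hook points will behave differently, so treat the numbers as an upper-bound illustration.

```python
def path_components(path: str) -> list:
    """Split an absolute path into its non-empty components."""
    return [part for part in path.split("/") if part]


def estimated_lookup_delay_us(path: str, delay_per_component_us: float) -> float:
    """Estimate added latency, assuming one delayed operation per component."""
    return len(path_components(path)) * delay_per_component_us


path = "/home/user/documents/large-dataset/file-001.dat"
per_open_us = estimated_lookup_delay_us(path, 100)
print(f"components: {len(path_components(path))}")
print(f"added delay per open: {per_open_us} us")

# Metadata-heavy workloads repeat this many times.
opens = 10_000
print(f"added delay for {opens} opens: {opens * per_open_us / 1_000_000} s")
```

Even a delay that looks negligible per call becomes visible once a workload performs thousands of lookups.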

Real-World VFS Delay Scenarios

Antivirus Software Impact: Modern endpoint protection often hooks VFS operations to scan files during access. A 50-200μs delay per file operation can make boot times 2-3x slower.

Container Filesystem Overhead: Docker’s layered filesystem adds VFS traversal through multiple overlay layers. Additional delays here compound with existing virtualization overhead.

Network Filesystem Latency: NFS and CIFS clients already introduce network round-trips; VFS delays can make interactive applications feel unresponsive.

Block Device Layer Delays: Queue Congestion Dynamics

Block Device Architecture

Below VFS lies the block device layer, responsible for managing IO queues, scheduling, and hardware interaction. The Linux block IO subsystem uses:

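- IO Schedulers: mq-deadline, BFQ, Kyber, and none on current multi-queue kernels; CFQ, deadline, and noop on legacy single-queue kernels (removed in Linux 5.0)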

- Request Queues: Buffering and reordering IO requests

- Elevator Algorithms: Optimizing disk head movement patterns

Queue Congestion Mechanics

Block device delays create queue congestion that affects the entire storage subsystem:

1. Request Queue Backlog

// Block device delays cause queue depth to increase

// Normal queue depth: 1-32 requests

// With delays: Can exceed 1000+ pending requests

When delays are introduced at the block layer, incoming requests accumulate faster than they can be processed, leading to:

- Memory Pressure: IO requests consume kernel memory

- Increased Latency: Requests wait longer in queue

- Throughput Degradation: Device saturation occurs at lower loads

2. IO Scheduler Inefficiency

Modern IO schedulers optimize for:

- Seek Time Minimization: Grouping nearby requests

- Fairness: Preventing starvation between processes

- Priority Handling: Real-time vs. normal priority requests

Delays disrupt these optimizations, causing schedulers to make suboptimal decisions.

3. Cascading Effects

Block delays create feedback loops:

- Delays increase queue depth

- Deeper queues increase average wait time

- Higher latency causes applications to issue more concurrent requests

- More concurrency deepens queues further
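A toy model makes the backlog effect visible. The sketch below simulates a single FIFO device with fixed arrival and service times and reports the deepest queue observed. It is a deliberately simplified assumption (one queue, deterministic timing, no scheduler, no feedback from applications), not a model of the Linux block layer.

```python
from collections import deque


def max_queue_depth(n_requests: int, interarrival_us: float, service_us: float) -> int:
    """Return the largest number of requests in the system at any arrival."""
    completions = deque()  # completion times of requests not yet finished
    last_completion = 0.0
    deepest = 0
    for i in range(n_requests):
        arrival = i * interarrival_us
        # Retire requests that completed before this one arrived.
        while completions and completions[0] <= arrival:
            completions.popleft()
        # The device serves one request at a time, in order.
        start = max(arrival, last_completion)
        last_completion = start + service_us
        completions.append(last_completion)
        deepest = max(deepest, len(completions))
    return deepest


# A request arrives every 200 us; the device needs 100 us per request.
print("no delay:    ", max_queue_depth(1000, 200, 100))
# Same load with an extra 500 us injected into every request.
print("500 us delay:", max_queue_depth(1000, 200, 100 + 500))
```

As long as service time stays below the arrival interval the queue never builds up; once the injected delay pushes service time above it, the backlog grows for as long as the load continues.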

Real-World Block Device Scenarios

Storage Security Appliances: Enterprise storage systems with inline deduplication, compression, or encryption add block-level delays. Understanding these helps size hardware appropriately.

Virtualization Storage Stacks: Hypervisor storage filters, vSAN layers, and distributed storage introduce block delays that compound with virtualization overhead.

Hardware RAID Controllers: Battery-backed write caches and consistency checks add measurable delays that affect application performance expectations.

Engineering Methodology: Testing with io-delayer

Installation and Setup

# Clone the repository

git clone https://github.com/samatild/io-delayer.git

cd io-delayer

# Build and install (requires kernel headers)

make all

sudo make install

sudo make load

VFS Delay Testing

# Set 100 microsecond VFS delay

sudo ./io-delayer-cli set 100

# Test path resolution impact

time find /usr -name "*.so" 2>/dev/null | head -10

# Measure application startup time

time python3 -c "import sys; print('Python started')"

Block Device Delay Testing

# Set 500 microsecond block device delay

sudo ./io-delayer-cli set-blk 500

# Test sequential throughput

dd if=/dev/zero of=/tmp/test bs=1M count=100

# Test random IO performance

fio --name=randread --rw=randread --bs=4k --size=1G --numjobs=4 --runtime=30

Performance Analysis Techniques

1. SystemTap/BPF Tracing

# Trace VFS operations with delays

stap -e '

probe vfs.read.return {

if (execname() == "target_app") {

printf("%s: VFS read latency %d us\n", execname(), gettimeofday_us() - @entry(gettimeofday_us()));

}

}

'

2. IO Queue Monitoring

# Monitor block device queue depths

iostat -x 1

# Check /proc/diskstats for queue congestion

grep sda /proc/diskstats

3. Flame Graph Analysis

# Generate IO flame graphs during testing

perf record -F 99 -a -g -- sleep 30

perf script | stackcollapse-perf.pl | flamegraph.pl > io-flame.svg

Real-World Engineering Applications

Security Software Performance Characterization

Understanding VFS delays helps security teams balance protection with performance:

# Simulate antivirus overhead

sudo ./io-delayer-cli set 150 # 150μs per file operation

# Test impact on common workflows

time grep -r "pattern" /var/log/ # Log analysis

time find /home -name "*.pdf" # Document indexing

Key Insight: 100-200μs VFS delays can make interactive applications feel “sluggish,” explaining user complaints about security software impact.

Storage System Capacity Planning

Block device delays help size storage arrays:

# Simulate storage appliance latency

sudo ./io-delayer-cli set-blk 2000 # 2ms storage latency

# Test application tolerance

fio --name=oltp_simulation --rw=randrw --rwmixread=70 --bs=8k --size=10G --numjobs=8 --runtime=300

Key Insight: Applications tolerant of 1-2ms latency can handle most enterprise storage, but 5+ms delays require architectural changes.

Container Performance Optimization

Docker and Kubernetes environments add multiple IO layers:

# Simulate container filesystem overhead

sudo ./io-delayer-cli set 50 # OverlayFS traversal

sudo ./io-delayer-cli set-blk 100 # Storage driver delays

# Test container startup time

time docker run --rm hello-world

Key Insight: Container startup times degrade linearly with VFS delays, explaining performance differences between bare metal and containerized deployments.

Kernel Implementation Details

Kprobe Integration

The module uses kprobes to intercept kernel functions without modification:

// VFS layer hooking

static struct kprobe kp_vfs_read = {

.symbol_name = "vfs_read",

.pre_handler = pre_handler_vfs_read,

};

// Block device layer hooking

static struct kprobe kp_blk_start_request = {

.symbol_name = "blk_start_request",

.pre_handler = pre_handler_blk_start_request,

};

Delay Injection Mechanism

static void inject_delay(unsigned int delay_us)

{

if (delay_us > 0) {

udelay(delay_us);

}

}

This uses udelay(), a busy-wait primitive with microsecond granularity. The CPU spins for the whole delay, so large values also consume CPU time on the core that issued the IO.

Sysfs Interface

Runtime configuration via sysfs:

# VFS delay control

/sys/kernel/io_delayer/vfs_delay_us

# Block device delay control

/sys/kernel/io_delayer/blk_delay_us
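If you prefer to script these settings rather than use the CLI, a thin wrapper around the sysfs files is enough. This sketch assumes the attributes accept and return a plain decimal integer, which is the usual sysfs convention but is not confirmed here; the helper names are hypothetical. The demonstration writes to a temporary directory so it runs without the module loaded.

```python
import tempfile
from pathlib import Path

# Directory named in the text above; requires the module to be loaded.
SYSFS_DIR = Path("/sys/kernel/io_delayer")


def read_delay_us(name: str, base: Path = SYSFS_DIR) -> int:
    """Read a delay attribute such as 'vfs_delay_us' as an integer."""
    return int((base / name).read_text().strip())


def write_delay_us(name: str, value: int, base: Path = SYSFS_DIR) -> None:
    """Write a delay attribute; assumes a plain decimal integer is accepted."""
    if value < 0:
        raise ValueError("delay must be non-negative")
    (base / name).write_text(f"{value}\n")


# Demonstration against a temporary directory standing in for sysfs.
with tempfile.TemporaryDirectory() as tmp:
    fake_sysfs = Path(tmp)
    write_delay_us("vfs_delay_us", 100, base=fake_sysfs)
    print(read_delay_us("vfs_delay_us", base=fake_sysfs))
```

On a real system, writing to the sysfs files requires root privileges.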

Performance Benchmarking Best Practices

Controlled Testing Environment

- Baseline Measurement: Always establish performance without delays first

- Incremental Testing: Start with small delays (10-50μs) and increase gradually

- Statistical Analysis: Run tests multiple times, use percentiles over averages

- System Monitoring: Track CPU usage, memory pressure, and IO queue depths
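The advice to prefer percentiles over averages is easy to demonstrate. In the sketch below the latency samples are invented: 95 fast operations and 5 slow ones. The `summarize_latencies` helper is a hypothetical name, built on the standard library's `statistics.quantiles`.

```python
import statistics


def summarize_latencies(samples_us):
    """Return mean and selected percentiles for a list of latency samples."""
    ordered = sorted(samples_us)
    # n=100 yields 99 cut points; index k-1 is the k-th percentile.
    cuts = statistics.quantiles(ordered, n=100, method="inclusive")
    return {
        "mean": statistics.fmean(ordered),
        "p50": cuts[49],
        "p95": cuts[94],
        "p99": cuts[98],
        "max": ordered[-1],
    }


# Invented data: 95 operations at 100 us, 5 outliers at 10 ms.
samples = [100] * 95 + [10_000] * 5
for name, value in summarize_latencies(samples).items():
    print(f"{name:>4}: {value}")
```

The mean lands somewhere no real request ever was, while the median shows the typical case and the high percentiles expose the tail that users actually notice.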

Realistic Delay Values

Based on real-world measurements:

- VFS delays: 10-200μs (antivirus, DLP software)

- Block delays: 100-2000μs (storage appliances, encryption)

- Network delays: 50-500μs (WAN acceleration, proxy servers)

Performance Metrics to Monitor

# System-level metrics

vmstat 1

iostat -x 1

sar -u 1

# Application-level timing

strace -c -p $(pidof target_app)

perf stat -p $(pidof target_app)

Troubleshooting and Validation

Verifying Module Operation

# Check module loading

lsmod | grep io_delayer

# Verify kprobe attachment

sudo grep io_delayer /sys/kernel/debug/kprobes/list

# Monitor delay settings

cat /sys/kernel/io_delayer/vfs_delay_us

cat /sys/kernel/io_delayer/blk_delay_us

Performance Impact Assessment

# Before delays (baseline)

sudo ./io-delayer-cli set 0

sudo ./io-delayer-cli set-blk 0

fio --name=baseline --rw=randread --bs=4k --size=1G --runtime=30 --output-format=json --output=baseline.json

# With VFS delays

sudo ./io-delayer-cli set 100

fio --name=vfs_delayed --rw=randread --bs=4k --size=1G --runtime=30 --output-format=json --output=vfs_delayed.json

# With block device delays

sudo ./io-delayer-cli set 0

sudo ./io-delayer-cli set-blk 500

fio --name=blk_delayed --rw=randread --bs=4k --size=1G --runtime=30 --output-format=json --output=blk_delayed.json

# Calculate degradation - compare IOPS, latency percentiles, CPU usage
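The comparison step can be scripted. The sketch below assumes the result files were produced with fio's JSON output format (`--output-format=json`), where each entry in the top-level `jobs` list carries a `read` section with an `iops` value. The helper names are hypothetical and the numbers in the demonstration are invented.

```python
import json


def load_fio_json(path: str) -> dict:
    """Load a fio result file written with --output-format=json."""
    with open(path) as handle:
        return json.load(handle)


def read_iops(fio_result: dict, job_index: int = 0) -> float:
    """Extract read IOPS for one job from a parsed fio JSON result."""
    return float(fio_result["jobs"][job_index]["read"]["iops"])


def degradation_percent(baseline: float, delayed: float) -> float:
    """Return how much lower 'delayed' is than 'baseline', in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - delayed) / baseline * 100.0


# Invented results standing in for baseline.json and blk_delayed.json.
baseline = {"jobs": [{"read": {"iops": 20000.0}}]}
blk_delayed = {"jobs": [{"read": {"iops": 5000.0}}]}
loss = degradation_percent(read_iops(baseline), read_iops(blk_delayed))
print(f"IOPS degradation: {loss:.1f}%")
```

With real files, replace the inline dictionaries with `load_fio_json("baseline.json")` and so on, and extend the comparison to the latency percentiles that matter for your workload.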

Security and Production Considerations

Important: This module is for testing purposes only. Production use can cause system instability or data loss.

- Requires root privileges for installation

- Can impact system responsiveness during high-delay testing

- Not intended for security enforcement (unlike actual antivirus software)

- MIT licensed for educational and research use

Advanced Use Cases

Custom Kernel Instrumentation

The module serves as a template for building custom IO tracing tools:

// Add custom probes for specific filesystems

static struct kprobe kp_ext4_writepages = {

.symbol_name = "ext4_writepages",

.pre_handler = custom_ext4_handler,

};

Performance Regression Testing

#!/bin/bash

# Automated testing script

for delay in 0 50 100 200; do

sudo ./io-delayer-cli set $delay

sudo ./io-delayer-cli set-blk $delay

# Run your favorite benchmark utility (fio, tuxtoaster, etc.)

echo "Testing with ${delay}us delays..."

fio --name=regression_test --rw=randrw --rwmixread=70 --bs=4k --size=1G --runtime=30 --output-format=json --output=results_${delay}us.json

# Or use tuxtoaster for comprehensive system testing

# tuxtoaster --category disk --test "Random IOPS R/W"

# Reset delays between tests

sudo ./io-delayer-cli set 0

sudo ./io-delayer-cli set-blk 0

sleep 5

done

Conclusion

The io-delayer kernel module provides essential visibility into how IO delays at different kernel layers impact overall system performance. By understanding VFS path resolution bottlenecks and block device queue congestion, engineers can make informed decisions about:

- Security software deployment strategies

- Storage system architecture choices

- Container performance optimization

- Application performance expectations

The tool bridges the gap between theoretical kernel knowledge and practical performance engineering, enabling data-driven decisions in complex Linux environments.

Key Takeaways:

- VFS delays primarily affect interactive performance through path resolution overhead

- Block device delays create queue congestion that impacts throughput and scalability

- Real-world delays range from microseconds (software) to milliseconds (hardware)

- Systematic testing with tools like io-delayer prevents performance surprises

Learn More & References

- Project Repository: github.com/samatild/io-delayer

- Linux VFS Documentation: kernel.org/doc/html/latest/filesystems/vfs.html

- Block IO Subsystem: kernel.org/doc/html/latest/block/index.html

- Kprobes Documentation: kernel.org/doc/html/latest/trace/kprobes.html

1.4 - Streamlining Linux Diagnostics with SOSParser


Parse, analyze, and understand Linux diagnostic reports with automated intelligence.

What is SOSParser?

SOSParser is a powerful web application designed to automatically parse and analyze Linux sosreport and supportconfig diagnostic files, converting them into comprehensive, interactive HTML reports. Created to streamline the often tedious process of manually reviewing system diagnostic data, SOSParser transforms raw diagnostic archives into structured, searchable insights that accelerate troubleshooting and system analysis.

Whether you’re a system administrator, DevOps engineer, or support technician dealing with complex Linux environments, SOSParser provides an automated approach to understanding what’s happening inside your systems.

The Problem SOSParser Solves

When Linux systems encounter issues, the standard diagnostic approach involves generating comprehensive reports using tools like:

- sosreport - A utility that collects detailed system information from Red Hat-based distributions

- supportconfig - SUSE’s equivalent diagnostic collection tool

These reports contain thousands of files with critical system information, but analyzing them manually is:

- Time-consuming: Hours of sifting through logs, configurations, and system data

- Error-prone: Easy to miss important correlations between different system components

- Inconsistent: Different analysts may interpret the same data differently

- Repetitive: Common patterns and issues require rediscovery each time

SOSParser addresses these challenges by providing automated, intelligent analysis that surfaces key insights immediately.

How SOSParser Works

Input Processing

SOSParser accepts standard diagnostic archives in various compressed formats:

- .tar.xz (most common)

- .tar.gz

- .tar.bz2

- .tar

Analysis Pipeline

Once uploaded, SOSParser processes the diagnostic data through multiple analysis modules:

- Data Extraction: Automatically unpacks and organizes the diagnostic archive

- Content Parsing: Extracts and structures data from hundreds of system files

- Correlation Analysis: Identifies relationships between different system components

- Insight Generation: Applies heuristics and rules to identify potential issues

- Report Generation: Creates an interactive HTML report with visualizations and recommendations
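As a rough illustration of the first pipeline stage, the sketch below opens a diagnostic archive with Python's `tarfile` module and counts files per top-level area. This is not SOSParser's code: the `summarize_archive` helper and the assumption that the archive has a single wrapping directory are illustrative only.

```python
import tarfile
from collections import Counter


def summarize_archive(path: str) -> Counter:
    """Count regular files per top-level area inside a diagnostic archive.

    Mode "r:*" lets tarfile detect gzip, bzip2, xz, or no compression.
    """
    counts = Counter()
    with tarfile.open(path, mode="r:*") as archive:
        for member in archive:
            if not member.isfile():
                continue
            parts = member.name.split("/")
            # Assume the first component is the archive's wrapping directory.
            area = parts[1] if len(parts) > 2 else "(root)"
            counts[area] += 1
    return counts
```

Calling `summarize_archive` on an archive returns a `Counter` you can print with `most_common()` to see which areas dominate. The function only reads member metadata; anything that actually extracts files from an untrusted archive should validate member paths first.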

What SOSParser Analyzes

System Information

- Hardware Details: CPU architecture, memory configuration, disk layout

- OS Information: Distribution, version, kernel details

- System Resources: Current utilization, capacity planning insights

System Configuration

- Boot Configuration: GRUB settings, init systems, startup services

- Authentication: PAM configuration, user management, security policies

- Services: Systemd units, cron jobs, running processes

- Security: SELinux/AppArmor status, firewall rules, package integrity

Filesystem Analysis

- Mount Points: Filesystem types, mount options, capacity usage

- LVM Configuration: Volume groups, logical volumes, physical volumes

- Disk Usage: Largest directories, file ownership patterns, permission issues

- Filesystem Health: Journal status, inode usage, fragmentation indicators

Network Analysis

- Interface Configuration: IP addresses, subnet masks, gateway settings

- Routing Tables: Static and dynamic routes, network connectivity

- DNS Configuration: Resolvers, search domains, DNS query patterns

- Firewall Rules: iptables/nftables configuration, active rulesets

- Network Services: Listening ports, connection states, network statistics

Log Analysis

- System Logs: /var/log/messages, /var/log/syslog, journald entries

- Kernel Logs: dmesg output, kernel ring buffer analysis

- Authentication Logs: Login attempts, sudo usage, security events

- Service Logs: Application-specific log analysis and error pattern detection

- Security Events: Failed access attempts, intrusion indicators

Cloud Services Integration

- AWS: EC2 instance metadata, IAM roles, VPC configuration

- Azure: VM extensions, resource groups, networking setup

- GCP: Compute Engine metadata, service accounts, network configuration

- Oracle Cloud: Instance details, VNICs, storage configuration

Getting Started with SOSParser

Docker Deployment (Recommended)

The easiest way to run SOSParser is using Docker:

# Pull the official image

docker pull samuelmatildes/sosparser:latest

# Run the container

docker run -d -p 8000:8000 --name sosparser samuelmatildes/sosparser:latest

Then open http://localhost:8000 in your browser.

Persisting Data

For production use, mount volumes to persist uploads and generated reports:

# Using bind mounts

docker run -d -p 8000:8000 --name sosparser \

-v $(pwd)/data/uploads:/app/webapp/uploads \

-v $(pwd)/data/outputs:/app/webapp/outputs \

samuelmatildes/sosparser:latest

# Using named volumes

docker run -d -p 8000:8000 --name sosparser \

-v sosparser_uploads:/app/webapp/uploads \

-v sosparser_outputs:/app/webapp/outputs \

samuelmatildes/sosparser:latest

Local Development

To build and run locally:

git clone https://github.com/samatild/SOSParser.git

cd SOSParser

docker build -t sosparser:local .

docker run -d -p 8000:8000 sosparser:local

Using SOSParser

Web Interface Workflow

- Upload: Select your sosreport or supportconfig file (supports multiple formats)

- Analyze: Click “Analyze Report” to start automated processing

- Review: Browse the generated interactive HTML report

- Export: Download reports for sharing or archival

Report Features

The generated reports include:

- Interactive Navigation: Collapsible sections, searchable content

- Visual Indicators: Color-coded severity levels for issues

- Cross-References: Links between related system components

- Recommendations: Actionable suggestions based on findings

- Export Options: PDF generation, data extraction

Common Use Cases

Incident Response

- Rapid triage of production system issues

- Correlation of symptoms across multiple subsystems

- Identification of root cause patterns

Capacity Planning

- Resource utilization analysis

- Performance bottleneck identification

- Growth trend assessment

Security Audits

- Configuration compliance checking

- Vulnerability assessment

- Access pattern analysis

Change Validation

- Pre/post-change comparison

- Configuration drift detection

- Impact assessment

Advanced Features and Roadmap

Currently Available

- Multi-format diagnostic file support

- Cloud platform detection and analysis

- Comprehensive system health scoring

- Interactive HTML report generation

Planned Enhancements

- Advanced Disk Diagnostics: SMART data analysis, ATA command integration

- Application Server Analysis: Apache/Nginx configuration parsing, database connectivity

- Container Orchestration: Kubernetes pod analysis, Docker container inspection

- Backup System Integration: Backup status validation, recovery testing

- Monitoring Integration: Prometheus metrics correlation, alerting rule validation

- Machine Learning: Anomaly detection, predictive issue identification

Performance and Scalability

SOSParser is designed to handle large diagnostic reports efficiently:

- Processing Speed: Most reports analyzed in under 2 minutes

- Memory Usage: Optimized for systems with 2GB+ RAM

- Storage: Reports typically 10-20% of original archive size

- Concurrency: Supports multiple simultaneous analyses

Security Considerations

- Local Processing: All analysis occurs locally - no data sent to external services

- Container Isolation: Docker deployment provides additional security boundaries

- Data Privacy: Sensitive information remains within your infrastructure

- Audit Trail: Processing logs available for compliance requirements

Integration and Automation

API Access

SOSParser provides REST API endpoints for integration with existing workflows:

# Upload and analyze via API

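# Replace <your_archive> with the path to your sosreport or supportconfig archive.

# The form field name (file) is an assumption; check the project README for the exact name.

curl -X POST -F "file=@<your_archive>" http://localhost:8000/api/analyze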

CI/CD Integration

- Automated analysis of system snapshots

- Regression testing for configuration changes

- Compliance validation pipelines

Monitoring Integration

- Alert generation based on analysis results

- Dashboard integration for system health overview

- Trend analysis across multiple systems

Contributing and Community

SOSParser is an open-source project that welcomes contributions:

- Bug Reports: Use GitHub Issues for problems or feature requests

- Code Contributions: Pull requests are reviewed and merged regularly

- Documentation: Help improve guides and examples

- Testing: Report compatibility with different Linux distributions

Join the community on Telegram for updates, discussions, and support.

Troubleshooting SOSParser

Common Issues

Upload Failures

- Check file size limits (typically 500MB max)

- Verify archive integrity before upload

- Ensure proper file permissions

Analysis Errors

- Confirm the diagnostic file was generated correctly

- Check for corrupted archives

- Review Docker logs for processing errors

Performance Issues

- Allocate sufficient CPU and memory resources

- Process large reports during off-peak hours

- Consider horizontal scaling for high-volume environments

Conclusion

SOSParser represents a significant advancement in Linux system diagnostics, transforming the traditionally manual and time-intensive process of analyzing sosreport and supportconfig files into an automated, intelligent workflow. By providing comprehensive analysis, actionable insights, and interactive reports, it empowers system administrators and support teams to resolve issues faster and maintain healthier Linux environments.

Whether you’re managing a single server or overseeing enterprise-scale deployments, SOSParser provides the tools needed to understand your systems at a deeper level, identify potential issues before they become critical, and maintain optimal system health.

Learn More

- Project Repository: github.com/samatild/SOSParser

- Docker Hub: hub.docker.com/r/samuelmatildes/sosparser

- Issue Tracker: GitHub Issues

1.5 - Linux Benchmarking Made Easy with Tux Toaster

Benchmark smarter, not harder — with Tux Toaster.

What is Tux Toaster?

Tux Toaster is an all-in-one performance toolkit for Linux. It triggers various load tests (“toasters”) to help you evaluate the performance and stability of your system across CPU, memory, disk, and network. It offers an interactive terminal menu with multi-select support and clear, stoppable workloads.

When to use it

- Hardware bring-up and burn-in

- Post-maintenance validation (kernel/firmware/driver updates)

- Capacity planning and instance comparison

- Performance regression investigations

- Reproducible stress scenarios for bug reports

Requirements

Tux Toaster targets Linux and relies on:

- Python 3.8+

- System utilities: dd, lsblk, taskset, pkill

- Internet connectivity for network tests

Optional/privileged:

- Root privileges for the “Unclean GC” runaway memory test to adjust oom_score_adj

Installation

Install from PyPI (recommended):

pip install tuxtoaster

tuxtoaster

If your shell cannot find tuxtoaster, add the install directory to PATH:

# User installs (scripts in ~/.local/bin)

export PATH="$HOME/.local/bin:$PATH"

Alternative: install from source (editable):

git clone https://github.com/samatild/tuxtoaster.git

cd tuxtoaster

pip install -U pip setuptools wheel

pip install -e .

tuxtoaster

Menu controls:

- Use arrow keys to navigate, Enter to select.

- Many submenus support multi-select; hints appear in the UI.

- Press q, x, or Esc in a menu to go back.

- During tests, press Enter to stop.

Quick start

From the main menu, pick a category and test(s) to run.

CPU

- Single Core

- All Cores

- Custom Number of Cores (uses taskset; experimental)

Memory

- Single Runaway Thread

- Multiple Runaway Threads

- Memory spikes

- Unclean GC (requires root to set oom_score_adj)

Disk

- IOPS Reads (4K, direct I/O)

- IOPS Writes (4K, direct I/O)

- Random IOPS R/W (4K, direct I/O)

- IOPS 50-50 R/W (4K, direct I/O)

- Throughput Reads (4MB, direct I/O)

- Throughput Writes (4MB, direct I/O)

- Random Throughput R/W (4MB, direct I/O)

- Throughput 50-50 R/W (4MB, direct I/O)

- Read while write cache is getting flushed

Network

- Network IN (Single) — downloads https://proof.ovh.net/files/100Mb.dat

- Network OUT (Single) — UDP to 8.8.8.8:53

- Network IN (Multiple) — N parallel downloads

- Network OUT (Multiple) — N parallel UDP senders

- Socket Exhaustion — exhaust process/kernel sockets or reserve listener port ranges

- Simulate Latencies — local TCP proxy that injects base latency and jitter (check project Git for instructions)

Multiple tests at once

Run CPU, Memory, Network, and Disk concurrently:

- From the main menu: Multiple → select items with SPACE → Enter to start.

- Press Enter to stop all running tests.

Behaviors:

- CPU: one busy process per core (like All Cores)

- Memory: threads allocate 500 MB chunks repeatedly (requires psutil)

- Network: continuous download of https://proof.ovh.net/files/100Mb.dat

- Disk: alternating dd read/write with 4MB direct I/O under a temp file

Reading results

Tux Toaster prints live progress and a summary when you stop a test. Disk tests create temporary files under a dedicated directory on the selected mount points and clean up on exit. Network tests report bandwidth per socket in multi-socket modes.

Tips:

- Run tests at least 3 times and use medians for comparisons.

- Keep a record of CPU governor, kernel version, microcode, and thermal state.

- Pin CPU frequency when comparing hardware to reduce variance.
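As a minimal sketch of the first tip, the snippet below compares two configurations by the median of repeated runs. The throughput figures and the `summarize` helper are hypothetical. It assumes you copy the numbers by hand from the summary of each run.

```python
from statistics import median

def summarize(runs):
    """Return the median and the min-to-max spread of repeated results."""
    return {"median": median(runs), "spread": max(runs) - min(runs)}

# Hypothetical disk throughput results in MB/s, three runs per configuration.
baseline = [412.0, 398.5, 405.2]
candidate = [371.9, 420.3, 380.1]

for name, runs in (("baseline", baseline), ("candidate", candidate)):
    print(name, summarize(runs))
```

A large spread relative to the difference between medians suggests the comparison needs more runs or a quieter system.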

Good benchmarking hygiene

- Stop noisy services (package updates, indexing, backup agents)

Troubleshooting

- Missing psutil: pip3 install psutil

- Permission errors: some memory tests and taskset pinning may require sudo

- Inconsistent results: check CPU governor, temperature, and background load

- Direct I/O errors: some filesystems/containers may not honor oflag=direct

Learn more

- Project: github.com/samatild/tuxtoaster

- PyPI: pypi.org/project/tuxtoaster

- Issues/feedback: open a GitHub issue with your logs and command line

2 - Kernel

Find automation helpers and reference material that streamline Linux administration tasks.

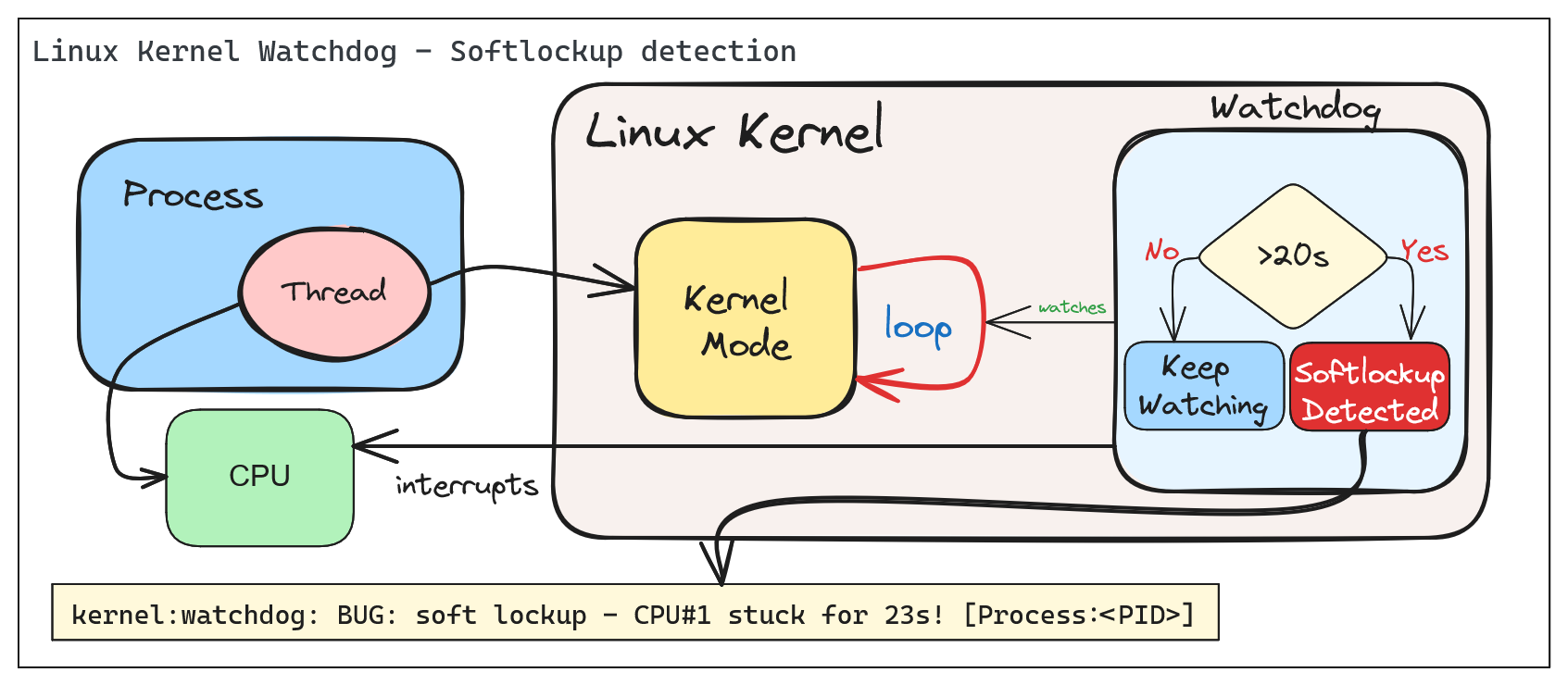

2.1 - Soft and Hard Lockups

Introduction

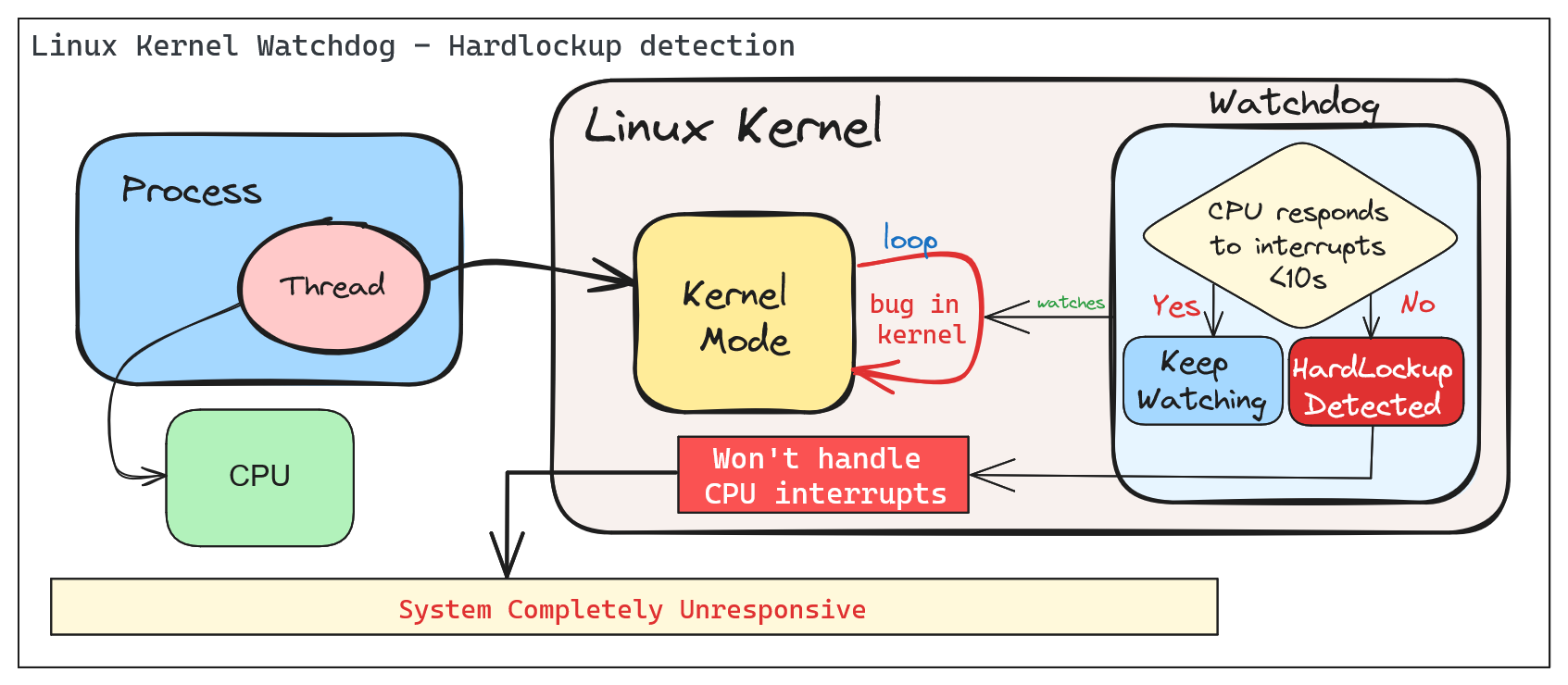

Kernel Watchdog

According to Linux Kernel Documentation, the Linux kernel can act as a watchdog to detect both soft and hard lockups.

The Linux Kernel Watchdog is a mechanism that monitors the system for both soft and hard lockups. It is designed to detect when a CPU is stuck in a loop and unable to make progress. When a soft lockup is detected, the watchdog will print a warning message to the system log.

Soft vs. Hard Lockups

Soft Lockup

A ‘softlockup’ is defined as a bug that causes the kernel to loop in kernel mode for more than 20 seconds, without giving other tasks a chance to run. The current stack trace is displayed upon detection and, by default, the system will stay locked up.

The watchdog can instead be configured to panic the system when a soft lockup is detected, by setting the softlockup_panic parameter to 1. With a non-zero kernel.panic timeout, the panic is followed by an automatic reboot.

Common error messages:

BUG: soft lockup - CPU#0 stuck for 22s! [swapper/0:1]

Modules linked in: ...

Hard Lockup

A ‘hardlockup’ is defined as a bug that causes the CPU to loop in kernel mode for more than 10 seconds, without letting other interrupts have a chance to run.

As with a soft lockup, the system displays the stack trace and stays locked up by default. The watchdog can be configured to panic the system when a hard lockup is detected by setting the hardlockup_panic parameter to 1; with a non-zero kernel.panic timeout, the panic is followed by an automatic reboot.

Common error messages:

NMI watchdog: Watchdog detected hard LOCKUP on cpu 0

Modules linked in: ...

Deep Dive into Soft/Hard Lockups

Reacting to soft/hard lockups

Ok, so you have a soft or hard lockup, what now? Here are some steps you can take to troubleshoot and resolve the issue:

The Basics

1. Check the system logs: Look for any error messages or warnings that may indicate the cause of the lockup.

2. Check the CPU load: High CPU load can cause lockups. Use tools like top or htop to monitor CPU usage.

3. Read the watchdog warning message: The watchdog will print a warning message to the system log when a lockup is detected. This message may provide clues as to the cause of the lockup. Usually, it includes a stack trace that shows where the CPU is stuck, along with the name and PID of the process that was running when the lockup was detected.

Thresholds

The watchdog has default thresholds for detecting soft and hard lockups. These thresholds can be adjusted to suit your system’s needs. Before changing thresholds, it is important to understand the implications of changing these values. Setting the thresholds too low may result in false positives, while setting them too high may result in missed lockups.

A periodic hrtimer runs to generate interrupts and kick the watchdog job. An NMI perf event is generated every “watchdog_thresh” (compile-time initialized to 10 and configurable through sysctl of the same name) seconds to check for hardlockups. If any CPU in the system does not receive any hrtimer interrupt during that time the ‘hardlockup detector’ (the handler for the NMI perf event) will generate a kernel warning or call panic, depending on the configuration. The watchdog job runs in a stop scheduling thread that updates a timestamp every time it is scheduled. If that timestamp is not updated for 2*watchdog_thresh seconds (the softlockup threshold) the ‘softlockup detector’ (coded inside the hrtimer callback function) will dump useful debug information to the system log, after which it will call panic if it was instructed to do so or resume execution of other kernel code.
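The relationship between the sysctl value and the two detection windows can be sketched as simple arithmetic. The function name `lockup_windows` is hypothetical, and the hrtimer period of 2*watchdog_thresh/5 is taken from the kernel's lockup watchdog documentation rather than from the paragraph above.

```python
def lockup_windows(watchdog_thresh):
    """Derive detector timings, in seconds, from kernel.watchdog_thresh."""
    return {
        # The NMI perf event checks for hard lockups every watchdog_thresh seconds.
        "hardlockup_window": watchdog_thresh,
        # The soft lockup threshold is 2 * watchdog_thresh.
        "softlockup_threshold": 2 * watchdog_thresh,
        # hrtimer period according to the kernel documentation (assumption here).
        "hrtimer_period": 2 * watchdog_thresh / 5,
    }

for thresh in (10, 30):
    print(thresh, lockup_windows(thresh))
```

With the default of 10, a soft lockup is reported after 20 seconds, which is consistent with the "stuck for 22s" style messages shown earlier. Raising the value to 30 moves the soft lockup threshold to 60 seconds.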

Check current threshold:

cat /proc/sys/kernel/watchdog_thresh

10

Update threshold to 30 seconds:

# For temporary change

echo 30 > /proc/sys/kernel/watchdog_thresh

# For permanent change

echo "kernel.watchdog_thresh = 30" >> /etc/sysctl.conf

sysctl -p

Advanced Troubleshooting

The watchdog can be configured to panic the system when a lockup is detected by setting the softlockup_panic or hardlockup_panic parameter to 1:

# Enable panic on soft lockup

echo 1 > /proc/sys/kernel/softlockup_panic

# Enable panic on hard lockup

echo 1 > /proc/sys/kernel/hardlockup_panic

In combination with crash dump, you can get a full dump of the system state when a lockup is detected. This can be useful for debugging the issue.

Step-by-step guide for this recipe:

1. Enable crash dump collection (kdump) and make sure that it captures a memory dump when a kernel panic occurs.

2. Configure the kernel to panic when a lockup is detected.

3. Analyze the crash dump to identify the cause of the lockup.

Demo - Creating a Softlockup and understanding the watchdog output

Simulating a Soft Lockup

For the example below, we will simulate a soft lockup by loading a kernel module designed to test whether the watchdog and lockup detection work as expected.

Test module to generate lockups: CONFIG_TEST_LOCKUP

Note: When installing Linux kernel modules, make sure that you are using the module source that matches your kernel version (browse the kernel source for your version, for example v6.9.5) and that you have the necessary kernel headers installed.

The following parameters are available to simulate a variety of lockups:

# modinfo soft.ko

filename: /root/CONFIG_TEST_LOCKUP/soft.ko

description: Test module to generate lockups

author: Konstantin Khlebnikov <[email protected]>

license: GPL

srcversion: 302B4AE69F898F7B25CABF8

depends:

retpoline: Y

name: soft

vermagic: 5.15.0-1064-azure SMP mod_unload modversions

parm: time_secs:lockup time in seconds, default 0 (uint)

parm: time_nsecs:nanoseconds part of lockup time, default 0 (uint)

parm: cooldown_secs:cooldown time between iterations in seconds, default 0 (uint)

parm: cooldown_nsecs:nanoseconds part of cooldown, default 0 (uint)

parm: iterations:lockup iterations, default 1 (uint)

parm: all_cpus:trigger lockup at all cpus at once (bool)

parm: state:wait in 'R' running (default), 'D' uninterruptible, 'K' killable, 'S' interruptible state (charp)

parm: use_hrtimer:use high-resolution timer for sleeping (bool)

parm: iowait:account sleep time as iowait (bool)

parm: lock_read:lock read-write locks for read (bool)

parm: lock_single:acquire locks only at one cpu (bool)

parm: reacquire_locks:release and reacquire locks/irq/preempt between iterations (bool)

parm: touch_softlockup:touch soft-lockup watchdog between iterations (bool)

parm: touch_hardlockup:touch hard-lockup watchdog between iterations (bool)

parm: call_cond_resched:call cond_resched() between iterations (bool)

parm: measure_lock_wait:measure lock wait time (bool)

parm: lock_wait_threshold:print lock wait time longer than this in nanoseconds, default off (ulong)

parm: disable_irq:disable interrupts: generate hard-lockups (bool)

parm: disable_softirq:disable bottom-half irq handlers (bool)

parm: disable_preempt:disable preemption: generate soft-lockups (bool)

parm: lock_rcu:grab rcu_read_lock: generate rcu stalls (bool)

parm: lock_mmap_sem:lock mm->mmap_lock: block procfs interfaces (bool)

parm: lock_rwsem_ptr:lock rw_semaphore at address (ulong)

parm: lock_mutex_ptr:lock mutex at address (ulong)

parm: lock_spinlock_ptr:lock spinlock at address (ulong)

parm: lock_rwlock_ptr:lock rwlock at address (ulong)

parm: alloc_pages_nr:allocate and free pages under locks (uint)

parm: alloc_pages_order:page order to allocate (uint)

parm: alloc_pages_gfp:allocate pages with this gfp_mask, default GFP_KERNEL (uint)

parm: alloc_pages_atomic:allocate pages with GFP_ATOMIC (bool)

parm: reallocate_pages:free and allocate pages between iterations (bool)

parm: file_path:file path to test (string)

parm: lock_inode:lock file -> inode -> i_rwsem (bool)

parm: lock_mapping:lock file -> mapping -> i_mmap_rwsem (bool)

parm: lock_sb_umount:lock file -> sb -> s_umount (bool)

We will simulate a soft lockup by loading the module with the following parameters:

insmod soft.ko time_secs=35 iterations=1 all_cpus=0 state="R"

Where:

- time_secs=35 - Lockup time in seconds

- iterations=1 - Number of lockup iterations

- all_cpus=0 - Do not trigger the lockup on all CPUs at once; only the CPU running insmod locks up

- state="R" - Wait in the ‘R’ (running) state

After loading the module, the CPU executing insmod will be locked up for 35 seconds. The watchdog will detect the soft lockup and print a warning message to the system log.

Understanding the watchdog output

The watchdog warning message

[ 568.503455] watchdog: BUG: soft lockup - CPU#1 stuck for 26s! [insmod:5912]

[ 568.508018] Modules linked in: soft(OE+) nls_iso8859_1 kvm_intel kvm crct10dif_pclmul crc32_pclmul ghash_clmulni_intel binfmt_misc sha256_ssse3 sha1_ssse3 aesni_intel crypto_simd cryptd joydev hid_generic serio_raw hyperv_drm drm_kms_helper syscopyarea hid_hyperv sysfillrect sysimgblt hid fb_sys_fops hv_netvsc hyperv_keyboard cec rc_core sch_fq_codel drm i2c_core efi_pstore ip_tables x_tables autofs4

The watchdog warning message includes the following information:

- BUG: soft lockup - Indicates that a soft lockup was detected

- CPU#1 stuck for 26s! - Indicates that CPU#1 was stuck for 26 seconds

- [insmod:5912] - Indicates that the process with PID 5912 caused the lockup (in this case, the insmod process)

- Modules linked in (...) - Lists the kernel modules that were loaded at the time of the lockup

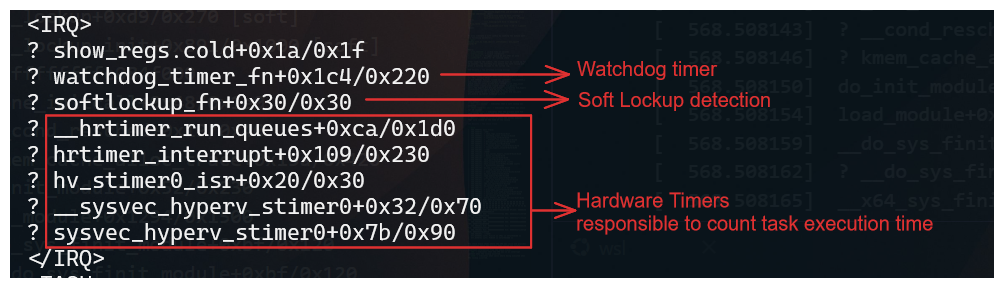

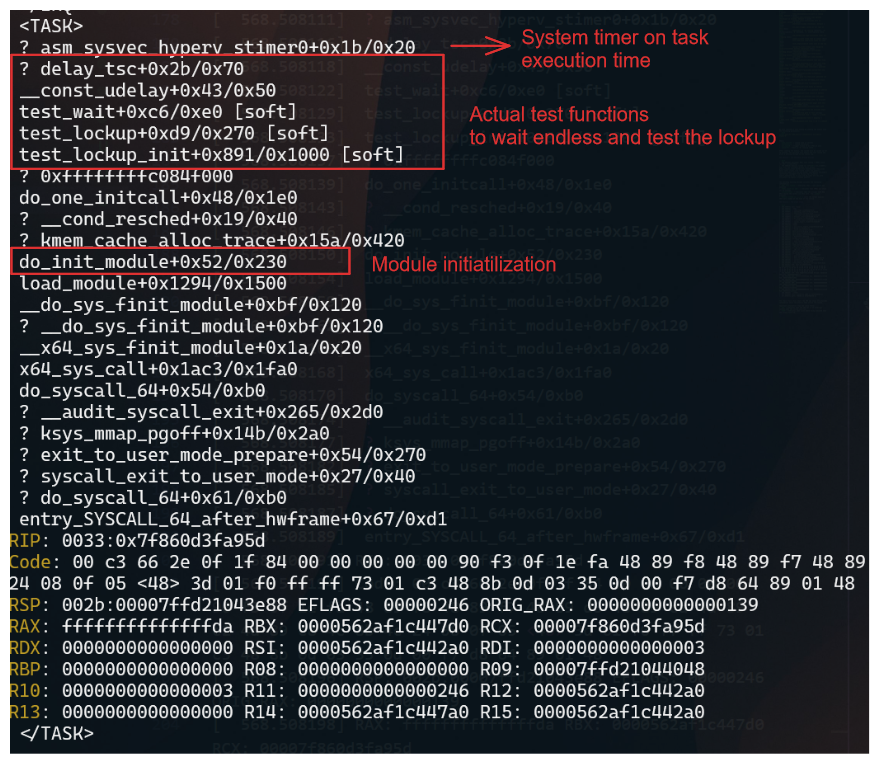
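When many log files need to be scanned, the fields of the warning line can be pulled out with a regular expression. This is a minimal sketch: the `parse_soft_lockup` helper and its pattern are illustrative, and the message format is assumed to match the sample shown above.

```python
import re

# Pattern for the soft lockup line as it appears in the sample above.
SOFT_LOCKUP = re.compile(
    r"BUG: soft lockup - CPU#(?P<cpu>\d+) stuck for (?P<secs>\d+)s! "
    r"\[(?P<comm>[^\]]+):(?P<pid>\d+)\]"
)

def parse_soft_lockup(line):
    """Return CPU, duration, process name, and PID, or None if no match."""
    m = SOFT_LOCKUP.search(line)
    if m is None:
        return None
    return {
        "cpu": int(m["cpu"]),
        "seconds": int(m["secs"]),
        "comm": m["comm"],
        "pid": int(m["pid"]),
    }

line = "[ 568.503455] watchdog: BUG: soft lockup - CPU#1 stuck for 26s! [insmod:5912]"
print(parse_soft_lockup(line))
```

The process name is matched greedily up to the last colon, so names that themselves contain a colon or slash, such as swapper/0, are kept intact.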

The stack trace

[ 568.508074] Call Trace:

[ 568.508075] <IRQ>

[ 568.508079] ? show_regs.cold+0x1a/0x1f

[ 568.508085] ? watchdog_timer_fn+0x1c4/0x220

[ 568.508089] ? softlockup_fn+0x30/0x30

[ 568.508092] ? __hrtimer_run_queues+0xca/0x1d0

[ 568.508095] ? hrtimer_interrupt+0x109/0x230

[ 568.508097] ? hv_stimer0_isr+0x20/0x30

[ 568.508101] ? __sysvec_hyperv_stimer0+0x32/0x70

[ 568.508104] ? sysvec_hyperv_stimer0+0x7b/0x90

[ 568.508109] </IRQ>

[ 568.508110] <TASK>

[ 568.508111] ? asm_sysvec_hyperv_stimer0+0x1b/0x20

[ 568.508116] ? delay_tsc+0x2b/0x70

[ 568.508118] __const_udelay+0x43/0x50

[ 568.508122] test_wait+0xc6/0xe0 [soft]

[ 568.508129] test_lockup+0xd9/0x270 [soft]

[ 568.508133] test_lockup_init+0x891/0x1000 [soft]

[ 568.508137] ? 0xffffffffc084f000

[ 568.508139] do_one_initcall+0x48/0x1e0

[ 568.508143] ? __cond_resched+0x19/0x40

[ 568.508146] ? kmem_cache_alloc_trace+0x15a/0x420

[ 568.508150] do_init_module+0x52/0x230

[ 568.508154] load_module+0x1294/0x1500

[ 568.508159] __do_sys_finit_module+0xbf/0x120

[ 568.508162] ? __do_sys_finit_module+0xbf/0x120

[ 568.508165] __x64_sys_finit_module+0x1a/0x20

[ 568.508168] x64_sys_call+0x1ac3/0x1fa0

[ 568.508170] do_syscall_64+0x54/0xb0

[ 568.508174] ? __audit_syscall_exit+0x265/0x2d0

[ 568.508177] ? ksys_mmap_pgoff+0x14b/0x2a0

[ 568.508182] ? exit_to_user_mode_prepare+0x54/0x270

[ 568.508185] ? syscall_exit_to_user_mode+0x27/0x40

[ 568.508187] ? do_syscall_64+0x61/0xb0

[ 568.508189] entry_SYSCALL_64_after_hwframe+0x67/0xd1

[ 568.508191] RIP: 0033:0x7f860d3fa95d

[ 568.508194] Code: 00 c3 66 2e 0f 1f 84 00 00 00 00 00 90 f3 0f 1e fa 48 89 f8 48 89 f7 48 89 d6 48 89 ca 4d 89 c2 4d 89 c8 4c 8b 4c 24 08 0f 05 <48> 3d 01 f0 ff ff 73 01 c3 48 8b 0d 03 35 0d 00 f7 d8 64 89 01 48

[ 568.508196] RSP: 002b:00007ffd21043e88 EFLAGS: 00000246 ORIG_RAX: 0000000000000139

[ 568.508198] RAX: ffffffffffffffda RBX: 0000562af1c447d0 RCX: 00007f860d3fa95d

[ 568.508199] RDX: 0000000000000000 RSI: 0000562af1c442a0 RDI: 0000000000000003

[ 568.508200] RBP: 0000000000000000 R08: 0000000000000000 R09: 00007ffd21044048

[ 568.508201] R10: 0000000000000003 R11: 0000000000000246 R12: 0000562af1c442a0

[ 568.508202] R13: 0000000000000000 R14: 0000562af1c447a0 R15: 0000562af1c442a0

[ 568.508204] </TASK>

[ 577.859869] soft: Finish on CPU1 in 34727950300 ns

[ 577.859874] soft: FINISH in 34727963600 ns

The stack trace shows where the CPU was stuck and provides information about the process that caused the lockup.

Important information from the stack trace:

<IRQ> - Frames from the interrupt context: the timer interrupt in which watchdog_timer_fn ran and detected the lockup.

<TASK> - Frames from the task context: the calls that were being executed when the lockup occurred.
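Frames prefixed with "?" are addresses found on the stack that the unwinder could not confirm as part of the call chain, so it often helps to filter them out first. The sketch below does that for a few lines copied from the trace above. The `reliable_frames` helper and its regular expression are illustrative and assume the line layout shown in this article.

```python
import re

# timestamp, optional "? " marker, symbol+offset/size, optional [module]
FRAME = re.compile(
    r"^\[\s*\d+\.\d+\]\s+(?P<unreliable>\? )?(?P<sym>[\w.]+)"
    r"\+0x[0-9a-f]+/0x[0-9a-f]+(?: \[(?P<mod>\w+)\])?$"
)

def reliable_frames(lines):
    """Return (symbol, module) pairs for frames without the '?' marker."""
    frames = []
    for line in lines:
        m = FRAME.match(line.strip())
        if m and not m["unreliable"]:
            frames.append((m["sym"], m["mod"]))
    return frames

trace = [
    "[ 568.508116] ? delay_tsc+0x2b/0x70",
    "[ 568.508118] __const_udelay+0x43/0x50",
    "[ 568.508122] test_wait+0xc6/0xe0 [soft]",
    "[ 568.508129] test_lockup+0xd9/0x270 [soft]",
    "[ 568.508137] ? 0xffffffffc084f000",
]
for sym, mod in reliable_frames(trace):
    print(sym, mod or "(core kernel)")
```

The first frame that carries a module name, here test_wait in the soft module, is usually a good place to start reading the source.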

When comparing with source code, you can identify the function that caused the lockup. In this case, the test_wait function in the soft module caused the lockup.

static void test_wait(unsigned int secs, unsigned int nsecs)

{

    if (wait_state == TASK_RUNNING) {

        if (secs)

            mdelay(secs * MSEC_PER_SEC);

        if (nsecs)

            ndelay(nsecs);

        return;

    }

    __set_current_state(wait_state);

    if (use_hrtimer) {

        ktime_t time;

        time = ns_to_ktime((u64)secs * NSEC_PER_SEC + nsecs);

        schedule_hrtimeout(&time, HRTIMER_MODE_REL);

    } else {

        schedule_timeout(secs * HZ + nsecs_to_jiffies(nsecs));

    }

}

Why did it hang?

- The test_wait function was waiting in the TASK_RUNNING state, so it busy-waited on the CPU instead of sleeping.

- Since we are loading a module, the execution will be in kernel mode, and the watchdog will detect the lockup.

- The mdelay function busy-waits for the specified number of milliseconds. In this case, it spun for 35 seconds without yielding the CPU, which caused the lockup.

Final Thoughts

The Linux Kernel Watchdog is a powerful tool for detecting soft and hard lockups. By understanding how the watchdog works and how to react to lockups, you can troubleshoot and resolve issues more effectively. Remember to check the system logs, CPU load, and watchdog warning messages when a lockup occurs. By following best practices and using advanced troubleshooting techniques, you can identify the root cause of lockups and take steps to prevent them in the future.

2.2 - Why Kernel Crash Dumps Are Critical for Root Cause Analysis

Postmortem Kernel Forensics with vmcore

Summary

When the Linux kernel panics, there is no userspace stack, no application logs, and often no intact filesystems. The only canonical, lossless record of the kernel’s terminal state is the crash dump (vmcore). Without vmcore, you are constrained to heuristics and guesswork; with vmcore, you can deterministically reconstruct CPU state, task scheduling, memory allocators, locks, timers, and subsystems at the exact point of failure. This is the difference between timeline narratives and hard proof.

What a vmcore Captures (and Why It Matters)

- CPU architectural state: general-purpose registers, control registers, MSRs, per-CPU contexts.

- Full kernel virtual memory snapshot: page tables, slab caches, VFS dentries/inodes, networking stacks, block layer queues, and device driver state.

- Task list and scheduler state: task_struct, runqueues, RT/DL classes, stop machine contexts.

- Lock state: mutex, spinlock_t owners, wait queues, and contention points.

- Timers/workqueues/interrupts: pending timers, softirqs, tasklets, IRQ threads, NMI backtraces.

With unstripped vmlinux and kernel debuginfo, these structures become symbol-resolved and type-aware in tools like crash and gdb.

Minimal Prerequisites for a Useful Dump

- Reserve crash kernel memory at boot: crashkernel=auto (or a fixed size appropriate to RAM and distro guidance).

- Ensure kdump service is active and the dump target has write bandwidth and space (prefer raw disk/LVM or fast local FS; only use NFS/SSH if necessary).

- Keep exact-matching debuginfo for the running kernel build:

  - Uncompressed vmlinux with full DWARF and symbols.

  - Matching System.map and all loaded module debuginfo (e.g., kernel-debuginfo, kernel-debuginfo-common on RHEL/Fedora; linux-image-…-dbgsym on Debian/Ubuntu repositories).

- Persist critical panic policies:

sysctl -w kernel.panic_on_oops=1

sysctl -w kernel.unknown_nmi_panic=1

sysctl -w kernel.panic_on_unrecovered_nmi=1

sysctl -w vm.panic_on_oom=2 # 1=panic on system-wide OOM (cpuset/mempolicy/memcg OOMs still kill a task), 2=always panic

sysctl -w kernel.panic=10 # auto-reboot N seconds after panic

Persist via /etc/sysctl.d/*.conf as needed. For manual testing, enable SysRq and force a controlled crash:

echo 1 | sudo tee /proc/sys/kernel/sysrq

echo c | sudo tee /proc/sysrq-trigger

Acquisition Pipeline and Size Reduction

makedumpfile can filter non-essential pages to reduce vmcore size and I/O time without destroying forensics value. Recommended options:

makedumpfile -l --message-level 1 \

-d 31 \

/proc/vmcore /var/crash/vmcore.filtered

- -d 31 drops zero pages, cache pages, user data pages, and free pages; tune the mask per incident.

- Always retain an unfiltered copy during critical investigations if space allows.
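The dump level passed to -d is a bitmask, so each value can be decoded into the page types it excludes. The sketch below follows the dump level table in the makedumpfile man page. The `excluded_pages` helper and the wording of the labels are illustrative.

```python
# Bit values as listed in the makedumpfile man page dump level table.
DUMP_LEVEL_BITS = {
    1: "zero pages",
    2: "cache pages without private data",
    4: "cache pages with private data",
    8: "user data pages",
    16: "free pages",
}

def excluded_pages(level):
    """List the page types that a makedumpfile dump level filters out."""
    if not 0 <= level <= 31:
        raise ValueError("dump level must be between 0 and 31")
    return [name for bit, name in DUMP_LEVEL_BITS.items() if level & bit]

for level in (0, 17, 31):
    print(level, excluded_pages(level))
```

A lower level such as 17 keeps page cache and user data in the dump, which can matter when the investigation involves file-backed or userspace memory.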

Core Tooling

- crash: purpose-built kernel postmortem shell using vmlinux DWARF types.

- gdb with vmlinux: useful for advanced symbol work and scripted analysis.

- vmcore-dmesg: extracts oops logs and last-kmsg from the dump.

Launch crash with debuginfo and module path:

crash /usr/lib/debug/lib/modules/$(uname -r)/vmlinux /var/crash/vmcore

Typical initial commands in crash:

sys # kernel, uptime, panic info

ps # task list summary

bt # backtrace of the current context (switch with 'set <pid>', or run 'bt <pid>')

log # kernel ring buffer extracted from vmcore

kmem -i # overall memory usage summary

files <PID> # open file descriptors of a task

dev -d # per-disk I/O statistics (plain 'dev' lists character/block devices)

irq # IRQ and softirq state

foreach bt # backtrace all tasks (can be heavy on large systems)

Example 1 — Kernel Bug/Oops Leading to Panic

Symptoms at runtime: abrupt reboot, serial console shows BUG/oops with taint flags; no userspace core dumps.

Postmortem workflow:

vmcore-dmesg /var/crash/vmcore | less

Look for signatures such as:

BUG: unable to handle kernel NULL pointer dereference at 0000000000000010

RIP: 0010:driver_xyz_process+0x5a/0x120 [driver_xyz]

Call Trace:

worker_thread+0x8f/0x1a0

kthread+0xef/0x120

ret_from_fork+0x2c/0x40

Tainted: G B W OE 5.14.0-xyz #1

Correlate symbols and inspect the faulting frame:

crash> sym driver_xyz_process

crash> dis -l driver_xyz_process+0x5a

crash> bt

crash> set <pid_of_worker>

crash> bt -f # show the raw stack data in each frame

crash> struct task_struct <task_addr>

Representative outputs (illustrative; exact fields vary with the crash and kernel versions):

# vmcore-dmesg (panic excerpt)

[ 1234.567890] Kernel panic - not syncing: Fatal exception

[ 1234.567891] CPU: 7 PID: 4123 Comm: kworker/u16:2 Tainted: G B W OE 5.14.0-xyz #1

[ 1234.567893] Hardware name: Generic XYZ/ABC, BIOS 1.2.3 01/01/2025

[ 1234.567895] Workqueue: events_unbound driver_xyz_wq

[ 1234.567897] RIP: 0010:driver_xyz_process+0x5a/0x120 [driver_xyz]

[ 1234.567901] Call Trace:

[ 1234.567905] worker_thread+0x8f/0x1a0

[ 1234.567906] kthread+0xef/0x120

[ 1234.567907] ret_from_fork+0x2c/0x40

crash> sys

KERNEL: /usr/lib/debug/lib/modules/5.14.0-xyz/vmlinux

DUMPFILE: /var/crash/vmcore [PARTIAL DUMP]

CPUS: 32

DATE: Tue Oct 14 10:22:31 2025

UPTIME: 02:14:58

LOAD AVERAGE: 6.14, 6.02, 5.77

PANIC: "Kernel panic - not syncing: Fatal exception"

PID: 4123

COMMAND: "kworker/u16:2"

TASK: ffff8b2a7f1f0c00 [THREAD_INFO: ffffb2f1c2d2a000]

CPU: 7

STATE: TASK_RUNNING (PANIC)

crash> ps

PID PPID CPU TASK ST %MEM VSZ RSS COMM

> 4123 2 7 ffff8b2a7f1f0c00 RU 0.1 0 0 kworker/u16:2

1 0 0 ffff8b2a70000180 IN 0.0 16272 1308 systemd

532 1 2 ffff8b2a703f9b40 IN 0.2 912312 80324 containerd

987 532 5 ffff8b2a7a2fcd00 IN 0.4 1452312 231212 kubelet

crash> bt

PID: 4123 TASK: ffff8b2a7f1f0c00 CPU: 7 COMMAND: "kworker/u16:2"

#0 [ffffb2f1c2d2be78] machine_kexec at ffffffff914b3e10

#1 [ffffb2f1c2d2bec8] __crash_kexec at ffffffff915a1c32

#2 [ffffb2f1c2d2bf28] panic at ffffffff914c2a9d

#3 [ffffb2f1c2d2bf80] oops_end at ffffffff9148df90

#4 [ffffb2f1c2d2bfb0] page_fault_oops at ffffffff9148e4b5

#5 [ffffb2f1c2d2bfe0] exc_page_fault at ffffffff91abc7e1

#6 [ffffb2f1c2d2c018] asm_exc_page_fault at ffffffff91c0133e

#7 [ffffb2f1c2d2c048] driver_xyz_process+0x5a/0x120 [driver_xyz]

#8 [ffffb2f1c2d2c0a0] worker_thread+0x8f/0x1a0

#9 [ffffb2f1c2d2c0e0] kthread+0xef/0x120

#10 [ffffb2f1c2d2c110] ret_from_fork+0x2c/0x40

crash> kmem -i

PAGES TOTAL PERCENTAGE

TOTAL MEM 3276800 12.5 GB 100%

FREE 152345 595 MB 4%

USED 3124455 11.9 GB 96%

SHARED 80312 313 MB 2%

BUFFERS 49152 192 MB 1%

CACHED 842304 3.2 GB 26%

SLAB 921600 3.5 GB 28%

PAGECACHE 655360 2.5 GB 20%

ZONE DMA32: min 16224, low 20280, high 24336, scanned 1e6, order 3 allocs failing

Reclaimers: kswapd0: active, direct reclaim: observed

crash> log | head -n 6

<0>[ 1234.567890] Kernel panic - not syncing: Fatal exception

<4>[ 1234.567900] CPU: 7 PID: 4123 Comm: kworker/u16:2 Tainted: G B W OE

<4>[ 1234.567905] RIP: 0010:driver_xyz_process+0x5a/0x120 [driver_xyz]

<6>[ 1234.567950] Workqueue: events_unbound driver_xyz_wq
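The page counts in the kmem -i excerpt above can be cross-checked against the reported sizes with simple arithmetic. This sketch assumes 4 KiB pages, the usual x86_64 base page size; the `pages_to_gib` helper is hypothetical.

```python
PAGE_SIZE = 4096  # assumption: 4 KiB base pages

def pages_to_gib(pages, page_size=PAGE_SIZE):
    """Convert a page count to GiB."""
    return pages * page_size / 2**30

# Page counts copied from the TOTAL MEM, USED, and SLAB rows above.
for label, pages in (("TOTAL MEM", 3276800), ("USED", 3124455), ("SLAB", 921600)):
    print(f"{label:10s} {pages_to_gib(pages):.1f} GiB")
```

If the converted values do not line up with the sizes crash prints, check whether the kernel was built with a different base page size.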

Actionable patterns:

- Null-dereference at a deref site → check expected invariants and lifetime rules for the object; validate RCU usage (rcu_read_lock()/_unlock() pairs) and reference counting (kref, refcount_t).

- Use-after-free → examine slab allocator metadata around the pointer; kmem and rd -p (raw reads) can validate freelist poisoning.

- Interrupt vs thread context → verify hardirq/softirq context in bt; ensure lock acquisition order obeys documented lockdep dependencies.

If the kernel is tainted by out-of-tree or unsigned modules (the O and E flags), ensure matching module debuginfo is loaded so frames resolve cleanly. Validate the module list:

crash> mod
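The taint string from the oops header, "G B W OE" in this example, can be decoded letter by letter. The table below is a partial sketch that covers only the flags appearing in this article plus P; the kernel's tainted-kernels documentation lists the full set. The `decode_taint` helper is hypothetical.

```python
# Partial table: only the flags relevant to this example.
TAINT_FLAGS = {
    "G": "no proprietary module loaded",
    "P": "proprietary module loaded",
    "B": "bad page referenced or unexpected page flags",
    "W": "kernel issued a warning earlier",
    "O": "out-of-tree module loaded",
    "E": "unsigned module loaded",
}

def decode_taint(flags):
    """Map each taint letter to a short description, skipping padding spaces."""
    return [
        (c, TAINT_FLAGS.get(c, "not in this partial table"))
        for c in flags
        if not c.isspace()
    ]

for flag, meaning in decode_taint("G B W OE"):
    print(flag, "-", meaning)
```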

From here, produce a minimal repro and map the faulting path to specific source lines using dis -l and DWARF line tables; attach exact register state and call trace to the fix.

Example 2 — Out-Of-Memory (OOM) and Panic-on-OOM

By default, OOM does not produce a vmcore because the kernel kills a task to free memory and continues. For deterministic forensics on pathological memory pressure, set vm.panic_on_oom=1 or 2 so the system panics and kdump captures a vmcore.

Pre-incident configuration:

sysctl -w vm.panic_on_oom=2

sysctl -w kernel.panic=15

After the event, extract the OOM report:

vmcore-dmesg /var/crash/vmcore | grep -A40 -B10 -n "Out of memory"

Example OOM excerpt:

[ 4321.000001] Out of memory: Killed process 29876 (java) total-vm:16384000kB, anon-rss:15500000kB, file-rss:12000kB, shmem-rss:0kB, UID:1000 pgtables:30240kB oom_score_adj:0

[ 4321.000015] oom_reaper: reaped process 29876 (java), now anon-rss:0kB, file-rss:0kB, shmem-rss:0kB

[ 4321.000120] Memory cgroup out of memory: Killed process 30555 (python) in cgroup /kubepods/besteffort/pod123/xyz

[ 4321.000200] Node 0 DMA32: free:152kB min:16224kB low:20280kB high:24336kB active_anon:10123456kB inactive_anon:1123456kB active_file:123456kB inactive_file:654321kB unevictable:0kB

[ 4321.000250] kswapd0: node 0, oom: task failed order:3, mode:0x24201ca(GFP_HIGHUSER_MOVABLE|__GFP_ZERO)
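To summarize victims across a long log, the "Killed process" lines can be parsed into structured records. This is a minimal sketch that assumes the line format of the excerpt above; the `parse_oom_kill` helper and its pattern are illustrative.

```python
import re

# Matches the global OOM "Killed process" line format shown in the excerpt.
OOM_KILL = re.compile(
    r"Out of memory: Killed process (?P<pid>\d+) \((?P<comm>[^)]+)\) "
    r"total-vm:(?P<total_vm>\d+)kB, anon-rss:(?P<anon_rss>\d+)kB"
)

def parse_oom_kill(line):
    """Return the victim's PID, name, and memory figures in kB, or None."""
    m = OOM_KILL.search(line)
    if m is None:
        return None
    return {
        "pid": int(m["pid"]),
        "comm": m["comm"],
        "total_vm_kb": int(m["total_vm"]),
        "anon_rss_kb": int(m["anon_rss"]),
    }

line = ("[ 4321.000001] Out of memory: Killed process 29876 (java) "
        "total-vm:16384000kB, anon-rss:15500000kB, file-rss:12000kB")
print(parse_oom_kill(line))
```

Memory cgroup kills use a different prefix ("Memory cgroup out of memory") and are deliberately not matched by this pattern.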

Selected crash views for OOM analysis:

crash> kmem -i

PAGES TOTAL PERCENTAGE

TOTAL MEM 3276800 12.5 GB 100%

FREE 20480 80 MB 0%

USED 3256320 12.4 GB 99%

CACHED 131072 512 MB 4%

SLAB 983040 3.7 GB 30%

Direct reclaim active; high-order allocations failing (order:3)

crash> ps | head -n 5

PID VSZ RSS COMM

29876 16384000 15500000 java

30555 2048000 1800000 python

987 1452312 231212 kubelet

Interpretation checklist inside crash:

crash> kmem -i # overall memory usage summary

crash> kmem -s # slab usage; look for runaway caches

crash> ps # per-task %MEM, VSZ, and RSS

crash> vtop -c <pid> <va> # translate a VA in that task's context to inspect the mapping

crash> files <PID> # open file descriptors

crash> p sysctl_oom_dump_tasks

crash> log # OOM killer selection rationale, constraints

Indicators:

- oom-kill:constraint=CONSTRAINT_NONE,nodemask=(null),cpuset=... shows the policy path; score/oom_score_adj determine the victim.

- Stalled reclaim (kswapd, direct reclaim) with high order allocations failing → likely hugepages, GFP_ATOMIC depletion, or CMA stress.

- One slab consuming disproportionate memory → e.g., runaway dentry or kmalloc-64 due to leak; confirm with kmem -S and inspect suspects via object walkers if available.

If OOM was triggered by a specific container/cgroup, use cgroup-aware views (kernel dependent):

crash> struct mem_cgroup <memcg_addr>

Correlating vmcore with Source and Binaries

Always analyze with the exact build artifacts of the panicked kernel:

- vmlinux and module .debug files must match the uname -r and build ID of the running kernel at the time of panic.

- Mismatches lead to wrong type layouts, invalid offsets, and misleading backtraces.

- On distros with split debuginfo, install the debuginfo packages for the precise NVR (Name-Version-Release) string.

Crash Analysis Cheat Sheet

- vmcore-dmesg: panic reason, oops, OOM logs; fastest high-signal overview.

- sys: kernel build, CPU count, uptime, panic string, current task/CPU.

- ps: task list with state and per-task %MEM, VSZ, and RSS.

- bt / bt -f: backtrace of the current or selected task; -f adds the raw stack data of each frame.

- kmem -i / -s / -S: memory inventory; slabs by cache; cache detail.

- log: kernel ring buffer reconstructed from vmcore.

- mod: loaded modules and taint state.

- files <PID>: open file descriptors for a task.

- irq: hardirq/softirq state.

- vtop -c <pid> <va>: VA→PFN translation; inspect mappings around suspect pages.

References and Further Reading

- Crash utility: https://crash-utility.github.io/

- makedumpfile project: https://github.com/makedumpfile/makedumpfile

- Upstream kdump guide: https://www.kernel.org/doc/html/latest/admin-guide/kdump/kdump.html

- Ubuntu Kernel Crash Dump Recipe: https://wiki.ubuntu.com/Kernel/CrashdumpRecipe

- Fedora kdump guide: https://docs.fedoraproject.org/en-US/fedora-coreos/debugging-kernel-crashes/

2.3 - Enabling Automatic Kernel Crash Collection with kdump

Automatic Enablement of Kernel Crash Dump Collection with kdump-enabler

This article explains how to automatically enable and configure kernel crash dump (kdump) collection on Linux systems using the kdump-enabler script. This approach works across multiple distributions and simplifies the process of preparing your system to collect crash dumps for troubleshooting and analysis.

Overview

kdump-enabler is a Bash script that automates the setup of kdump:

- Installs required packages

- Configures the crashkernel parameter in GRUB

- Enables and starts the kdump service

- Sets up SysRq for manual crash triggering

- Creates backups of configuration files before changes

- Supports Ubuntu, Debian, RHEL, CentOS, Fedora, openSUSE, Arch Linux, and more

Prerequisites

- Root privileges (run with sudo)

- systemd-based Linux distribution

- GRUB bootloader

- Sufficient disk space in /var/crash for crash dumps

Installation

Clone the repository and run the script:

git clone https://github.com/samatild/kdump-enabler.git

cd kdump-enabler

sudo ./kdump-enabler.sh

Or download and run directly:

curl -O https://raw.githubusercontent.com/samatild/kdump-enabler/main/kdump-enabler.sh

chmod +x kdump-enabler.sh

sudo ./kdump-enabler.sh

Usage

Run the script interactively:

sudo ./kdump-enabler.sh

Or use options for automation:

sudo ./kdump-enabler.sh -y # Non-interactive mode

sudo ./kdump-enabler.sh --check-only # Only check current configuration

sudo ./kdump-enabler.sh --no-sysrq # Skip SysRq crash enablement

What the Script Does

- Detects your Linux distribution and package manager

- Checks current kdump status

- Installs required packages

- Configures crashkernel parameter in GRUB based on system RAM

- Enables kdump service at boot and starts it

- Enables SysRq for manual crash triggering

- Creates crash dump directory at /var/crash
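The RAM-based crashkernel sizing step can be pictured with a small sketch. It reads MemTotal from /proc/meminfo-style text and maps it to a reservation size. The tier table below is purely illustrative: the thresholds, the fallback value, and the function names are assumptions made for this example and are not taken from kdump-enabler, whose actual sizing rules may differ.

```python
import re

# Illustrative tiers only: (upper RAM bound in GiB, crashkernel value).
# These thresholds are assumptions for this sketch, not kdump-enabler's table.
ILLUSTRATIVE_TIERS = [(4, "256M"), (16, "384M"), (64, "512M")]
ILLUSTRATIVE_FALLBACK = "768M"


def mem_total_gib(meminfo_text):
    """Return the MemTotal value from /proc/meminfo-style text, in GiB."""
    match = re.search(r"^MemTotal:\s+(\d+)\s+kB", meminfo_text, re.MULTILINE)
    if match is None:
        raise ValueError("MemTotal line not found")
    # /proc/meminfo reports kB; 1024 * 1024 kB make one GiB.
    return int(match.group(1)) / (1024 * 1024)


def pick_crashkernel(ram_gib, tiers=ILLUSTRATIVE_TIERS,
                     fallback=ILLUSTRATIVE_FALLBACK):
    """Return the first tier value whose upper bound covers ram_gib."""
    for upper_gib, size in tiers:
        if ram_gib <= upper_gib:
            return size
    return fallback
```

On a live system the input would come from reading /proc/meminfo, and the result would be the value placed after crashkernel= on the kernel command line.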

Post-Installation

After running the script, reboot your system for the crashkernel parameter to take effect:

sudo reboot

Verify kdump is working:

- Ubuntu/Debian:

sudo kdump-config test

sudo systemctl status kdump-tools

- RHEL/CentOS/Fedora:

sudo kdumpctl showmem

sudo systemctl status kdump

- Check crashkernel:

cat /proc/cmdline | grep crashkernel

- Check SysRq:

cat /proc/sys/kernel/sysrq # Should output: 1
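The two file-based checks above can also be scripted. The following is a minimal sketch, not part of kdump-enabler: the function names are hypothetical, and the helpers take the file contents as strings so they can be exercised without touching /proc.

```python
import re


def crashkernel_value(cmdline):
    """Return the first crashkernel= value on a kernel command line, or None."""
    match = re.search(r"(?:^|\s)crashkernel=(\S+)", cmdline)
    return match.group(1) if match else None


def sysrq_all_enabled(sysrq_text):
    """True when the sysrq setting is 1, meaning all SysRq functions are enabled."""
    return sysrq_text.strip() == "1"
```

On a running system you would pass in the contents of /proc/cmdline and /proc/sys/kernel/sysrq. A result of None from crashkernel_value() usually means the system has not been rebooted since GRUB was updated.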

Examples

Below are examples of running the script on different distributions and with various options, along with the kinds of output you can expect.

Interactive run (Ubuntu/Debian)

sudo ./kdump-enabler.sh

# Output (abridged):

╔══════════════════════════════════════════════════════════════╗

║ KDUMP ENABLER v1.0.0 ║

╚══════════════════════════════════════════════════════════════╝

[INFO] Detecting Linux distribution...

[SUCCESS] Detected: Ubuntu 22.04

[INFO] Package manager: apt

[INFO] Checking current kdump configuration...

[WARNING] No crashkernel parameter found in kernel command line

[WARNING] kdump service exists but is not active

[WARNING] System requires kdump configuration

[WARNING] This script will:

1. Install kdump packages (linux-crashdump kdump-tools kexec-tools)

2. Configure crashkernel parameter in GRUB

3. Enable and start kdump service

4. Enable SysRq crash trigger

5. Require a system reboot to complete setup

Do you want to continue? [y/N] y

[INFO] Installing required packages...

... apt-get update -qq

... apt-get install -y linux-crashdump kdump-tools kexec-tools

[SUCCESS] Packages installed successfully

[INFO] Configuring crashkernel parameter...

[INFO] Recommended crashkernel size: 384M (Total RAM: 12GB)

... updating /etc/default/grub

... running update-grub

[SUCCESS] GRUB configuration updated

[INFO] Configuring kdump settings...

... setting USE_KDUMP=1 in /etc/default/kdump-tools

[SUCCESS] kdump-tools configured

[INFO] Enabling kdump service...

[SUCCESS] kdump service enabled at boot

[WARNING] kdump service will start after reboot (crashkernel parameter needs to be loaded)

[INFO] Enabling SysRq crash trigger...

[SUCCESS] SysRq enabled for current session

[SUCCESS] SysRq configuration persisted to /etc/sysctl.conf

╔════════════════════════════════════════════════════════════════╗

║ KDUMP SETUP COMPLETED ║

╚════════════════════════════════════════════════════════════════╝

IMPORTANT: A system reboot is required to apply all changes!

Non-interactive run (auto-confirm)

sudo ./kdump-enabler.sh -y

# Output differences:

# - Skips confirmation prompts

# - Performs install/configuration immediately

Check-only mode (no changes)

sudo ./kdump-enabler.sh --check-only

# Output (abridged):

[INFO] Checking current kdump configuration...

[WARNING] No crashkernel parameter found in kernel command line

[WARNING] kdump service not found

[INFO] Crash dump directory: /var/crash (0 dumps found)

# Exits after status check without installing or modifying anything

Skip SysRq enablement

sudo ./kdump-enabler.sh -y --no-sysrq

# Output differences:

# - Does not enable SysRq or persist sysctl settings

# - All other steps (packages, GRUB, service) proceed

RHEL/Fedora example highlights

sudo ./kdump-enabler.sh -y

# Output (abridged):

[SUCCESS] Detected: Red Hat Enterprise Linux 9

[INFO] Package manager: yum

[INFO] Installing required packages...

... yum install -y kexec-tools

[SUCCESS] Packages installed successfully

[INFO] Configuring crashkernel parameter...

... updating /etc/default/grub

... grub2-mkconfig -o /boot/grub2/grub.cfg

[SUCCESS] GRUB2 configuration updated

[INFO] Configuring kdump settings...

... ensuring path /var/crash in /etc/kdump.conf

... setting core_collector makedumpfile -l --message-level 1 -d 31

[SUCCESS] kdump.conf configured

Testing Crash Dumps

⚠️ Warning: The following will immediately crash your system and generate a dump.

echo c | sudo tee /proc/sysrq-trigger

After reboot, check for crash dumps:

ls -lh /var/crash/
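If you want to check for dumps from a script rather than by eye, a sketch along these lines may help. The file-name patterns are assumptions: kdump on RHEL-family systems typically writes a file named vmcore, while kdump-tools typically writes dump.<timestamp>; adjust them to whatever your distribution produces. The function name is hypothetical.

```python
from pathlib import Path

# Assumed dump file names: "vmcore" (RHEL-family kdump) and
# "dump.<timestamp>" (Debian/Ubuntu kdump-tools). Adjust as needed.
DUMP_PATTERNS = ("vmcore", "dump.*")


def find_crash_dumps(crash_dir="/var/crash"):
    """Return a sorted list of (path, size_in_bytes) for dump-like files."""
    root = Path(crash_dir)
    if not root.is_dir():
        return []
    found = set()
    for pattern in DUMP_PATTERNS:
        # rglob descends into the per-crash subdirectories.
        found.update(p for p in root.rglob(pattern) if p.is_file())
    return sorted((p, p.stat().st_size) for p in found)
```

An empty list after a test crash suggests the dump was not captured; the Troubleshooting steps below are the next place to look.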

Troubleshooting

- Ensure crashkernel is loaded:

cat /proc/cmdline | grep crashkernel

- Reboot after running the script

- Check available memory and disk space

- View service logs:

sudo journalctl -u kdump -xe

- Update GRUB if needed and reboot

References

For more details, see the kdump-enabler GitHub repository: https://github.com/samatild/kdump-enabler

2.4 - Kernel Mode vs User Mode: Privilege Levels and System Call Execution

CPU Privilege Levels and Execution Contexts

Summary

Modern processors implement hardware-enforced privilege levels to isolate untrusted user code from critical kernel services. Linux uses two primary modes: kernel mode (ring 0, CPL 0, EL1) and user mode (ring 3, CPL 3, EL0). Kernel mode grants unrestricted access to CPU features, physical memory, I/O ports, and privileged instructions. User mode restricts access to a virtualized, isolated address space and requires kernel mediation for hardware resources. The transition between modes occurs via system calls, interrupts, and exceptions, all managed by the kernel’s interrupt and system call handlers. Understanding this separation is fundamental to security, performance optimization, and kernel debugging.

CPU Privilege Levels (Architecture Overview)

x86/x86-64 Privilege Rings

The x86 architecture defines four privilege levels (rings 0-3), though Linux uses only rings 0 and 3:

- Ring 0 (Kernel Mode): Highest privilege, executes kernel code, device drivers, interrupt handlers

- Ring 1-2: Unused by Linux (historically used for device drivers or hypervisors)

- Ring 3 (User Mode): Lowest privilege, executes user applications

Current Privilege Level (CPL): Stored in the lower 2 bits of the CS (Code Segment) register:

- CPL=0: Kernel mode

- CPL=3: User mode

Kernel source: arch/x86/include/asm/segment.h

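// Simplified CPL check macros (the kernel's own user_mode() helper lives in arch/x86/include/asm/ptrace.h)

// Compare the RPL bits of CS against USER_RPL (3), not against the full USER_CS selector

#define user_mode(regs) (((regs)->cs & SEGMENT_RPL_MASK) == USER_RPL)

#define kernel_mode(regs) (!user_mode(regs))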
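To see what the CPL bits look like in practice, the sketch below masks the low two bits of a CS selector value. It is a user-space model written for illustration, not kernel code. The selector values 0x10 and 0x33 used in the usage note are the ones typically seen for kernel and user code segments on x86-64 Linux, and are treated here as assumptions.

```python
SEGMENT_RPL_MASK = 0x3  # low two bits of a segment selector
USER_RPL = 0x3          # ring 3


def cpl_from_cs(cs):
    """Extract the privilege level (0-3) from a CS selector value."""
    return cs & SEGMENT_RPL_MASK


def is_user_mode(cs):
    """True when the selector's privilege bits indicate ring 3."""
    return cpl_from_cs(cs) == USER_RPL
```

For example, cpl_from_cs(0x10) gives 0 (kernel mode) and cpl_from_cs(0x33) gives 3 (user mode). When reading register dumps in an oops or in crash, the CS value alone tells you which mode the CPU was in.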

ARM64 Exception Levels

ARM64 uses Exception Levels (EL0-EL3):

- EL0 (User Mode): Applications and user-space code

- EL1 (Kernel Mode): Linux kernel, hypervisor in some configurations

- EL2 (Hypervisor): Virtualization layer (KVM, Xen)

- EL3 (Secure Monitor): TrustZone secure world

Current Exception Level (EL): Stored in CurrentEL system register (bits [3:2])

Kernel source: arch/arm64/include/asm/ptrace.h

#define PSR_MODE_EL0t 0x00000000

#define PSR_MODE_EL1t 0x00000004

#define PSR_MODE_EL1h 0x00000005
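The encoding behind these constants can be unpacked with a few lines of code. The sketch below is an illustrative model (the function name is hypothetical): bits [3:2] of the mode field carry the exception level, and bit 0 selects the stack pointer, where "t" means SP_EL0 and "h" means the level's own SP_ELx.

```python
PSR_MODE_EL0t = 0x0
PSR_MODE_EL1t = 0x4
PSR_MODE_EL1h = 0x5


def decode_mode(mode):
    """Render an AArch64 mode field as a string such as 'EL1h'."""
    el = (mode >> 2) & 0x3             # bits [3:2]: exception level
    sp = "h" if mode & 0x1 else "t"    # bit 0: 1 = SP_ELx, 0 = SP_EL0
    return f"EL{el}{sp}"
```

Decoding the three constants above yields "EL0t", "EL1t", and "EL1h" respectively, which matches the suffixes in their names.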

Kernel Mode Characteristics

Privileges and Capabilities

1. Unrestricted Memory Access

- Direct access to physical memory addresses

- Can read/write kernel data structures

- Access to kernel address space (typically 0xffff800000000000 and above on x86-64)

- Can modify page tables, MMU configuration

2. Privileged Instructions

- cli/sti (disable/enable interrupts)

- lgdt/lidt (load GDT/IDT)

- mov crX (control register access)

- in/out (I/O port access)

- hlt (halt CPU)

- wrmsr/rdmsr (Model-Specific Registers)

3. Interrupt and Exception Handling

- Can install interrupt handlers

- Access to interrupt controller (APIC, GIC)

- Exception vector table modification

4. System Control

- CPU scheduling decisions

- Process creation/destruction

- File system operations

- Network stack management

Kernel example - Direct memory access:

// The kernel can reach physical memory through its direct mapping

void *vaddr = __va(0x1000000); // Kernel virtual address that maps physical address 0x1000000

unsigned long value = *(unsigned long *)vaddr;

// User mode cannot do this: dereferencing a kernel address from user space raises a segmentation fault
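The address-space split mentioned above can be made concrete with a small classifier. This is a sketch that assumes x86-64 with 4-level paging (48-bit virtual addresses); systems using 5-level paging have different boundaries. The constant and function names are hypothetical.

```python
# Assumes 48-bit virtual addresses (4-level paging) on x86-64.
USER_MAX = 0x0000_7FFF_FFFF_FFFF     # top of the lower (user) half
KERNEL_MIN = 0xFFFF_8000_0000_0000   # bottom of the upper (kernel) half
ADDR_MAX = 0xFFFF_FFFF_FFFF_FFFF


def classify_address(addr):
    """Classify a 64-bit virtual address as user, kernel, or non-canonical."""
    if 0 <= addr <= USER_MAX:
        return "user"
    if KERNEL_MIN <= addr <= ADDR_MAX:
        return "kernel"
    return "non-canonical"
```

This is handy when reading backtraces or fault addresses: a pointer in the gap between the two halves is non-canonical and often points to corruption rather than to a valid mapping.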

Kernel Mode Execution Contexts

1. Process Context

- Executing on behalf of a user process (system call handler)

- Has associated task_struct, user-space memory mappings

- Can sleep, be preempted

- Example: read(), write(), open() syscall handlers

2. Interrupt Context

- Executing in response to hardware interrupt

- No associated process (current may point to the interrupted process)

- Cannot sleep, very limited blocking operations

- Must be fast and non-blocking

- Example: Network packet received, timer interrupt

3. Softirq/Tasklet Context

- Deferred interrupt processing

- Can run in interrupt context or in a ksoftirqd thread

- Similar constraints to interrupt context

- Example: Network packet processing, timer callbacks

Kernel source - Process context check: